Fact-checked by the VisualeNews editorial team

Quick Answer

Edge computing processes data near its source — on local devices or nearby servers — rather than sending it to a distant cloud data center. As of July 2025, the global edge computing market is valued at over $61 billion and is projected to reach $378 billion by 2030. It reduces latency, cuts bandwidth costs, and enables real-time decisions in applications like autonomous vehicles, smart factories, and financial systems.

Edge computing explained simply: it is a distributed computing model that moves data processing closer to where data is generated, rather than routing everything through a centralized cloud. According to Statista’s 2024 market data, the edge computing market is expanding at a compound annual growth rate of 37.9%, driven by the explosive growth of connected devices and demand for near-instant response times.

Understanding how edge computing works matters now because it is actively reshaping how businesses handle costs, security, and digital infrastructure — and those changes flow directly into the products and services consumers pay for. This guide covers what edge computing is, how it works, how it compares to cloud computing, and why it has real financial implications for businesses and households.

Key Takeaways

- The global edge computing market exceeded $61 billion in 2024 and is forecast to grow more than sixfold, to $378 billion, by 2030, according to MarketsandMarkets research.

- Edge computing reduces data transmission latency to as low as 1 millisecond in optimized deployments, compared to 30–100 ms for typical cloud round-trips, per Cisco IoT infrastructure documentation.

- An estimated 75 billion IoT devices were projected to be connected globally by 2025, all generating data that edge infrastructure is designed to handle, according to Statista’s IoT forecast.

- Businesses using edge computing report bandwidth cost reductions of up to 40% by processing data locally rather than uploading it to cloud servers, per IDC industry analysis.

- Major technology companies including Amazon Web Services, Microsoft Azure, and Google Cloud have all launched dedicated edge computing platforms, signaling the technology’s shift from experimental to mainstream infrastructure.

In This Guide

- What Is Edge Computing and Why Does It Exist?

- How Does Edge Computing Actually Work?

- How Does Edge Computing Differ From Cloud Computing?

- Where Is Edge Computing Being Used Right Now?

- What Are the Financial Implications of Edge Computing?

- How Does Edge Computing Affect Security and Privacy?

- What Does the Future of Edge Computing Look Like?

What Is Edge Computing and Why Does It Exist?

Edge computing is a distributed IT architecture that processes data at or near the point where it is created, rather than sending it to a centralized data center. The term “edge” refers to the geographic edge of a network — closer to the user or device generating data.

The concept emerged as a direct response to the limitations of centralized cloud computing. As billions of devices began generating continuous streams of data, routing all of it back to distant servers became impractical, expensive, and slow.

The Problem That Created Edge Computing

Traditional cloud architectures require data to travel from a device to a data center and back before any action is taken. For a self-driving car or a hospital patient monitor, even a 50-millisecond delay can be unacceptable.

The rise of the Internet of Things (IoT) — sensors, cameras, industrial machines, and connected consumer devices — made the bandwidth and latency problem acute. According to Statista’s global IoT projections, the number of connected devices is expected to surpass 75 billion by 2025. Sending that volume of data to central servers is neither efficient nor cost-effective.

A single autonomous vehicle can generate up to 4 terabytes of data per day from its sensors and cameras. Uploading all of that to the cloud in real time is impractical over today's mobile networks, so most of it has to be processed on the vehicle itself, which is exactly the job edge computing is designed for.
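To put the 4-terabyte figure in perspective, the short Python sketch below converts a daily data volume into the sustained upload rate it would require. It assumes decimal units (1 TB = 10^12 bytes) and, by default, that the data is spread evenly over 24 hours; a vehicle that produces the same volume during a couple of hours of driving would need a far higher rate.

```python
# Sketch: average upload rate needed to stream a daily data volume to the cloud.
# Assumptions: decimal units (1 TB = 10**12 bytes, 1 Mbit = 10**6 bits).

SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 s


def sustained_mbps(bytes_per_day: float, active_seconds: float = SECONDS_PER_DAY) -> float:
    """Average rate in Mbit/s if the data is uploaded evenly over active_seconds."""
    bits = bytes_per_day * 8
    return bits / active_seconds / 1e6


daily_bytes = 4 * 10**12  # the "up to 4 TB per day" figure

# Spread evenly over a full day:
print(f"24 h average: {sustained_mbps(daily_bytes):.0f} Mbit/s")
# Same volume produced during a 2-hour drive:
print(f"2 h of driving: {sustained_mbps(daily_bytes, 2 * 60 * 60):.0f} Mbit/s")
```

Even the 24-hour average works out to roughly 370 Mbit/s per vehicle, sustained around the clock, before multiplying by the size of a fleet.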

How Does Edge Computing Actually Work?

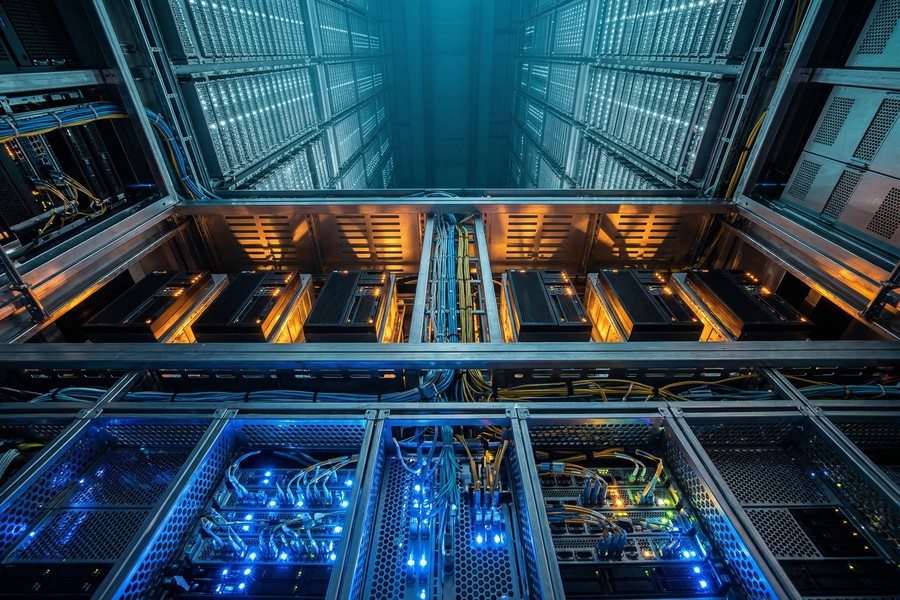

At a technical level, edge computing means deploying compute power (processors, storage, and networking) in physical hardware placed close to data sources. Instead of one central brain, there are many smaller processing nodes distributed across a network.

These nodes are called edge nodes or edge servers. They can be embedded in devices themselves (on-device edge), located in a nearby facility like a telecom hub (near-edge), or positioned in a regional micro data center (far-edge).

The Three-Layer Architecture

Most edge computing deployments follow a three-tier structure: the device layer, the edge layer, and the cloud layer.

- Device layer: Sensors, cameras, and endpoints that generate raw data.

- Edge layer: Local servers or gateways that filter, process, and act on data in real time.

- Cloud layer: Central servers that receive only summarized, relevant, or archival data from the edge.

This tiered model means the vast majority of routine processing happens locally. Only data that requires deep analysis, long-term storage, or cross-system coordination gets sent to the cloud. The result is faster responses, lower bandwidth consumption, and reduced cloud computing costs.
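A minimal sketch of that division of labor, in Python: the edge function acts on every raw reading locally (here, a simple threshold alert) and forwards only a compact summary to the cloud. The names `edge_process`, `cloud_ingest`, and `Summary`, and the threshold logic, are hypothetical illustrations rather than any platform's API.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Summary:
    """Compact record the edge forwards to the cloud instead of raw readings."""
    sensor_id: str
    count: int
    mean_value: float
    peak: float
    alerts: list[float]


def edge_process(sensor_id: str, readings: list[float], alert_threshold: float) -> Summary:
    """Edge layer: inspect every raw reading locally, keep only a summary."""
    # Real-time action happens here, with no cloud round trip.
    alerts = [r for r in readings if r > alert_threshold]
    return Summary(sensor_id, len(readings), mean(readings), max(readings), alerts)


def cloud_ingest(summaries: list[Summary]) -> dict[str, float]:
    """Cloud layer: receives summaries only, e.g. for long-term trend storage."""
    return {s.sensor_id: s.mean_value for s in summaries}


# Device layer: 1,000 readings from one hypothetical temperature sensor.
readings = [20.0 + (i % 10) * 0.5 for i in range(1000)]
summary = edge_process("sensor-17", readings, alert_threshold=24.0)
print(summary.count, summary.peak, len(summary.alerts))  # raw data stays local
print(cloud_ingest([summary]))  # only the summary travels upstream
```

The cloud receives one small record instead of 1,000 readings, while the alert decision was already made at the edge.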

Key Hardware Components

Edge infrastructure relies on compact, rugged hardware. Common components include edge gateways, micro data centers, and purpose-built chips from companies like NVIDIA and Intel designed for local AI inference workloads.

5G networks are a critical enabler. The low latency and high bandwidth of 5G allow edge nodes to communicate with devices and with each other at latencies earlier mobile networks could not reach. Telecom companies including Verizon and AT&T have made multi-access edge computing (MEC) a core part of their 5G rollout strategies.

Edge computing deployments can reduce data transmission latency to as low as 1 millisecond in optimized 5G-connected environments, compared to the 30–100 millisecond round trip typical of standard cloud connections. For real-time applications like autonomous driving or surgical robotics, that difference can determine whether the system reacts quickly enough to operate safely.
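The arithmetic behind that claim is simple enough to sketch. The Python below estimates how far a vehicle moves while waiting for a response, assuming a constant 100 km/h, and the minimum round-trip time imposed by distance alone, assuming light travels through optical fiber at roughly 200 km per millisecond. Both are simplifications that ignore processing, queuing, and braking time.

```python
# Sketch: why milliseconds matter. Assumptions: constant speed, and light in
# optical fiber covering roughly 200 km per millisecond (about 2/3 of c).

FIBER_KM_PER_MS = 200.0


def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Meters a vehicle travels before a response arrives."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * delay_ms / 1000


def min_fiber_rtt_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation alone (no processing)."""
    return 2 * distance_km / FIBER_KM_PER_MS


for delay in (100, 30, 10, 1):
    print(f"{delay:>3} ms at 100 km/h -> {distance_during_delay_m(100, delay):.2f} m")

# A data center 1,000 km away costs at least 10 ms round trip before any work is done.
print(f"1,000 km: >= {min_fiber_rtt_ms(1000):.0f} ms round trip")
```

At 100 km/h, a 100 ms delay corresponds to nearly 3 meters of travel, while 1 ms corresponds to under 3 centimeters.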

How Does Edge Computing Differ From Cloud Computing?

Edge computing and cloud computing are not competing technologies — they are complementary. The key difference is where processing occurs and how quickly results are needed.

Cloud computing centralizes processing in large-scale data centers operated by providers like Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform. It excels at tasks requiring massive compute power, long-term storage, and global accessibility. Edge computing excels at tasks requiring speed, local autonomy, and bandwidth efficiency.

| Feature | Cloud Computing | Edge Computing |
|---|---|---|
| Processing Location | Centralized data centers | Local nodes near data source |
| Latency | 30–100 ms (typical) | 1–10 ms (optimized) |
| Bandwidth Cost | High (all data transmitted) | Lower (local filtering reduces upload) |
| Best Use Cases | Analytics, storage, AI training | Real-time decisions, IoT, automation |
| Offline Capability | No (requires connectivity) | Yes (local processing continues) |
| Infrastructure Cost | Lower upfront, pay-per-use | Higher upfront hardware investment |

The majority of enterprise deployments use a hybrid model: edge nodes handle time-sensitive tasks locally, while the cloud handles analytics, machine learning model training, and long-term data management. This is the architecture behind platforms like AWS Outposts and Microsoft Azure Edge Zones.
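One way to picture the hybrid split is as a placement rule. The Python sketch below is a toy policy under stated assumptions: a task runs at the edge if the cloud round trip alone would exceed its latency budget, heavy non-urgent work goes to the cloud, and everything else stays local to avoid uploading raw data. `Task`, `place`, and the 60 ms default round trip are hypothetical, not how AWS Outposts or Azure Edge Zones actually schedule workloads.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    latency_budget_ms: float   # how quickly a result is needed
    needs_heavy_compute: bool  # e.g. model training or fleet-wide analytics


def place(task: Task, cloud_rtt_ms: float = 60.0) -> str:
    """Toy edge/cloud placement rule for a hybrid deployment."""
    # If the cloud round trip alone would blow the budget, it must run locally.
    if task.latency_budget_ms < cloud_rtt_ms:
        return "edge"
    # Heavy, non-urgent work goes to centralized capacity.
    if task.needs_heavy_compute:
        return "cloud"
    # Otherwise stay local, so raw data does not have to be uploaded.
    return "edge"


for t in (
    Task("collision-avoidance", 10, False),
    Task("retrain-model", 3_600_000, True),
    Task("shift-report", 5_000, False),
):
    print(t.name, "->", place(t))
```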

Where Is Edge Computing Being Used Right Now?

Edge computing is already operational across industries — it is not a future concept. Its most active deployments are in manufacturing, healthcare, retail, transportation, and financial services.

Industrial and Manufacturing Applications

Smart factories use edge computing to monitor equipment in real time and predict failures before they occur. Siemens and General Electric both deploy edge infrastructure on production floors where millisecond-level response times are required to prevent costly shutdowns.

According to IDC’s manufacturing technology research, manufacturers using edge-enabled predictive maintenance report equipment downtime reductions of up to 50%, which lowers production costs and can, in turn, influence product pricing.
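Predictive maintenance at the edge often starts with something as simple as spotting readings that drift away from a machine's recent baseline. The sketch below uses a rolling z-score in plain Python; the `VibrationMonitor` class, window size, and 4-sigma limit are illustrative assumptions, and production systems typically use richer models.

```python
from collections import deque
from statistics import mean, pstdev


class VibrationMonitor:
    """Hypothetical edge-side check: flag readings far from a rolling baseline."""

    def __init__(self, window: int = 50, z_limit: float = 4.0):
        self.history = deque(maxlen=window)  # most recent readings only
        self.z_limit = z_limit

    def update(self, value: float) -> bool:
        """Return True if value deviates more than z_limit std devs from the window mean."""
        anomalous = False
        if len(self.history) == self.history.maxlen:  # wait for a full baseline
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.z_limit:
                anomalous = True
        self.history.append(value)  # note: flagged values also enter the baseline
        return anomalous


monitor = VibrationMonitor(window=20)
normal = [1.0 + 0.01 * (i % 5) for i in range(40)]
flags = [monitor.update(v) for v in normal]
print(any(flags))            # steady readings: no alert
print(monitor.update(5.0))   # sudden spike: alert raised locally
```

Because the check runs next to the machine, an alert does not wait on a cloud round trip. One design caveat: this version adds flagged values to the baseline; a stricter monitor might exclude them.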

Healthcare and Financial Services

In healthcare, edge devices process patient monitoring data locally inside hospitals, reducing the risk of a network outage cutting off life-critical alerts. Philips and GE Healthcare have embedded edge processing in their patient monitoring systems.
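The outage-resilience point can be sketched as a store-and-forward pattern: alarms are evaluated on the local node, while records queue up and are forwarded only when the uplink is available. The `BedsideEdgeNode` class and its 90% SpO2 threshold are hypothetical illustrations, not clinical guidance or any vendor's design.

```python
from collections import deque


class BedsideEdgeNode:
    """Hypothetical store-and-forward node: alarm locally, sync when online."""

    def __init__(self, spo2_alarm: int = 90):
        self.spo2_alarm = spo2_alarm  # illustrative threshold, not clinical guidance
        self.outbox = deque()         # records waiting for the uplink
        self.local_alerts = []        # alarms raised on-site

    def ingest(self, patient_id: str, spo2: int) -> None:
        if spo2 < self.spo2_alarm:
            # Decided locally, so it works even if the network is down.
            self.local_alerts.append((patient_id, spo2))
        self.outbox.append({"patient": patient_id, "spo2": spo2})

    def sync(self, uplink_up: bool) -> int:
        """Forward queued records if the uplink is up; return how many were sent."""
        if not uplink_up:
            return 0
        sent = len(self.outbox)
        self.outbox.clear()  # stand-in for an actual upload to the cloud
        return sent


node = BedsideEdgeNode()
node.ingest("bed-4", 97)
node.ingest("bed-4", 86)           # arrives during a network outage
print(node.local_alerts)           # alarm still raised locally
print(node.sync(uplink_up=False))  # nothing sent while offline
print(node.sync(uplink_up=True))   # backlog forwarded once connectivity returns
```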

In financial services, edge computing is used for high-frequency trading, fraud detection, and ATM networks. Banks use local edge nodes to flag suspicious transactions in real time, without waiting for a cloud round trip, which helps protect consumer accounts faster.
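A rule-based stand-in for local fraud screening is sketched below in Python. It flags a transaction if the amount exceeds a limit or if the same card has been used too many times in the past minute. The thresholds, the `Txn` type, and the `flag_locally` function are hypothetical; real bank systems typically combine such rules with machine-learning models.

```python
from dataclasses import dataclass


@dataclass
class Txn:
    card_id: str
    amount: float
    timestamp_s: float


def flag_locally(txn: Txn, recent: list[Txn],
                 max_amount: float = 2_000.0, max_per_minute: int = 3) -> list[str]:
    """Return the reasons (if any) to hold a transaction for review."""
    reasons = []
    if txn.amount > max_amount:
        reasons.append("amount")
    # Velocity rule: same card used too often in the preceding 60 seconds.
    last_minute = [
        t for t in recent
        if t.card_id == txn.card_id and 0 <= txn.timestamp_s - t.timestamp_s <= 60
    ]
    if len(last_minute) >= max_per_minute:
        reasons.append("velocity")
    return reasons


recent = [Txn("c1", 20.0, 0), Txn("c1", 20.0, 10), Txn("c1", 20.0, 20)]
print(flag_locally(Txn("c1", 50.0, 30), recent))     # fourth use within a minute
print(flag_locally(Txn("c2", 5_000.0, 30), recent))  # unusually large amount
print(flag_locally(Txn("c1", 50.0, 200), recent))    # normal
```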

“Edge computing is not about replacing the cloud — it is about extending intelligence to where action needs to happen. The latency gap between a decision and its consequence is the entire value proposition.”

What Are the Financial Implications of Edge Computing?

Edge computing has direct financial consequences for businesses and indirect effects on consumers. Understanding them is part of being financially informed in a technology-driven economy.

For businesses, the upfront hardware investment is significant, but operational savings, particularly on cloud bandwidth bills, can be substantial. Companies that process data locally rather than uploading it to cloud servers can reduce bandwidth costs by up to 40%, according to IDC analysis. Over time, those recurring savings accumulate and can offset the initial hardware outlay.
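Whether those savings justify the hardware comes down to a break-even calculation. The Python sketch below assumes hypothetical figures: $60,000 of edge hardware, a $12,500 monthly cloud bandwidth bill, IDC's best-case 40% reduction, and $1,000 a month to run the edge nodes. It ignores discounting and hardware replacement.

```python
import math


def breakeven_months(upfront_cost: float, monthly_bandwidth_bill: float,
                     savings_rate: float, monthly_edge_opex: float = 0.0) -> float:
    """Months until bandwidth savings repay the edge hardware (no discounting)."""
    net_monthly_saving = monthly_bandwidth_bill * savings_rate - monthly_edge_opex
    if net_monthly_saving <= 0:
        return math.inf  # never pays back
    return upfront_cost / net_monthly_saving


# Hypothetical inputs; 0.40 is the "up to 40%" best case.
print(breakeven_months(60_000, 12_500, 0.40, monthly_edge_opex=1_000))
print(breakeven_months(60_000, 12_500, 0.05, monthly_edge_opex=1_000))
```

With these assumptions, the hardware pays for itself in 15 months; at a 5% savings rate the running costs exceed the savings and it never breaks even.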

How Edge Computing Costs Affect Consumer Prices

Retail companies using edge computing at checkout terminals and inventory systems can reduce infrastructure costs that might otherwise be passed on to consumers. Similarly, telecom providers investing in edge infrastructure for 5G may offer faster, more reliable services at competitive price points, or pass infrastructure costs into subscription fees.

For households managing technology expenses carefully, understanding what drives digital infrastructure costs is increasingly relevant. Edge-enabled services are often billed as subscriptions, so these infrastructure investments can surface in recurring monthly charges.

When evaluating cloud-based software or IoT services for your home or business, ask whether the provider uses edge processing. Edge-enabled services typically offer better performance during internet outages, lower latency, and stronger data privacy — all of which affect the long-term value of what you are paying for.

Investment and Market Impact

The financial markets have taken notice. ARM Holdings, Qualcomm, and NVIDIA, all major designers of chips used in edge devices, have attracted significant investor interest tied to edge computing growth projections. According to MarketsandMarkets forecasts, the sector is on track to reach $378 billion by 2030.
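The two MarketsandMarkets figures imply a growth rate that is easy to check. The compound annual growth rate (CAGR) is (end / start)^(1 / years) - 1; the Python sketch below applies it to $61 billion in 2024 and $378 billion in 2030, assuming exactly six years of compounding.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


start, end = 61, 378  # USD billions, 2024 and 2030 (MarketsandMarkets figures)
print(f"Growth multiple: {end / start:.1f}x")
print(f"Implied CAGR: {cagr(start, end, 2030 - 2024):.1%}")
```

That works out to roughly 35.5% a year and a 6.2x increase overall. Other firms' estimates, such as Statista's 37.9% CAGR, likely differ because they use different base years and market definitions.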

How Does Edge Computing Affect Security and Privacy?

Edge computing changes the security landscape in both beneficial and challenging ways. It reduces exposure in some areas while introducing new vulnerabilities in others.

On the positive side, processing sensitive data locally means less of it travels across public networks. For applications handling medical records, financial transactions, or personal biometrics, local processing reduces interception risk. Data that never leaves the building is data that cannot be intercepted in transit.

New Security Challenges at the Edge

However, distributing processing across hundreds or thousands of edge nodes dramatically expands the attack surface. Each node is a potential entry point. Unlike a centralized data center with a single, heavily guarded perimeter, edge deployments require securing many dispersed physical devices — some in publicly accessible locations like retail stores or streetlight poles.

The National Institute of Standards and Technology (NIST) has published guidance on securing IoT and edge devices, emphasizing device authentication, encrypted communication, and regular firmware updates as baseline requirements.
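As one concrete illustration of device authentication, the Python sketch below signs each message from an edge device with HMAC-SHA256 using a per-device secret key, and the receiver rejects anything whose tag does not match. This is an assumption-laden example, not NIST's prescribed method: HMAC provides integrity and authenticity but not confidentiality (that still requires encryption such as TLS), and a real deployment would also need key provisioning, rotation, and replay protection.

```python
import hashlib
import hmac
import json


def sign(device_key: bytes, payload: dict) -> str:
    """HMAC-SHA256 tag over a canonical JSON encoding of the payload."""
    message = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(device_key, message, hashlib.sha256).hexdigest()


def verify(device_key: bytes, payload: dict, tag: str) -> bool:
    """Recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign(device_key, payload), tag)


# Hypothetical per-device secret, provisioned when the device is installed.
key = b"example-device-key-do-not-reuse"
reading = {"device": "cam-12", "ts": 1_700_000_000, "people_count": 4}
tag = sign(key, reading)

print(verify(key, reading, tag))                          # genuine message
print(verify(key, {**reading, "people_count": 40}, tag))  # tampered payload
print(verify(b"wrong-key", reading, tag))                 # unknown device
```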

For consumers, the privacy angle is significant. Edge devices like smart home systems process audio and video locally rather than streaming it to corporate servers — which can mean greater privacy. But poorly secured edge devices can also be compromised to surveil users or hijack local networks. Understanding your digital identity and how to protect it is increasingly important as edge-connected devices multiply in homes.

What Does the Future of Edge Computing Look Like?

In one sentence: edge computing is likely to become invisible infrastructure, embedded in nearly every device and network within a decade. The technology is moving from specialized deployments toward a ubiquitous, default architecture.

The convergence of 5G, artificial intelligence, and edge computing is the defining infrastructure story of the late 2020s. AI models are increasingly being compressed and deployed directly on edge devices — a practice called on-device inference — removing the need to send queries to cloud AI servers at all.
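Compression for on-device inference takes several forms, including quantization, pruning, and distillation. The Python sketch below shows only the simplest: symmetric post-training quantization of a weight list to 8-bit integers with one shared scale. Storing int8 values instead of 32-bit floats cuts weight storage by 4x, at the cost of a rounding error of at most half the scale per weight. The function names and sample weights are hypothetical; production toolchains use per-channel scales, calibration data, and integer arithmetic kernels.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: w is approximated by q * scale, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map 8-bit integers back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.003, 0.9]  # hypothetical layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)  # small integers, 1 byte each instead of 4
print(f"scale={scale:.4f}, max rounding error={max_error:.4f}")
```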

Edge AI and the Next Generation of Applications

Companies like NVIDIA with its Jetson platform, Qualcomm with its AI inference chips, and Apple with its Neural Engine have already embedded AI processing at the device level. In consumer terms: when your phone processes a photo, translates speech, or detects your face without needing an internet connection, that is edge AI in action.

According to Gartner’s forecasts, 75% of enterprise-generated data would be created and processed outside a traditional centralized data center or cloud by 2025, up from roughly 10% in 2018. That shift represents a fundamental restructuring of how digital infrastructure works.

Financial and technological literacy increasingly overlap in this environment. Just as AI is changing how we search the internet, edge computing is changing how the services we pay for are built and delivered. Being informed about these shifts helps consumers and investors make better decisions.

Frequently Asked Questions

What is edge computing, in simple terms?

Edge computing means processing data close to where it is created instead of sending it to a distant server. Think of it as having a mini data center near your device rather than one far away. This makes digital services faster, cheaper to run, and more reliable when internet connections are poor.

What is the difference between edge computing and cloud computing?

Cloud computing centralizes all processing in remote data centers, while edge computing distributes processing to local nodes near data sources. They are not competing — most modern systems use both. The cloud handles complex analytics and storage; the edge handles real-time, latency-sensitive tasks.

Is edge computing the same as fog computing?

No, but they are related. Fog computing, a term coined by Cisco, describes a broader architecture that includes edge computing as a subset. Fog computing refers to the entire distributed layer between devices and the cloud, while edge computing specifically refers to processing at or very near the device itself.

How does edge computing affect data privacy?

Edge computing can improve privacy by keeping sensitive data local rather than transmitting it to third-party cloud servers. Medical records, financial data, and biometrics processed on-site are not exposed to interception in transit. However, poorly secured edge devices can introduce new privacy risks if compromised.

What industries use edge computing the most?

Manufacturing, healthcare, retail, transportation, and financial services are the leading adopters as of 2025. Autonomous vehicles, smart factories, and real-time fraud detection are among the highest-value use cases. Telecom companies are also heavy users as they build out 5G multi-access edge computing (MEC) infrastructure.

Does edge computing replace the need for cloud computing?

No. Edge computing supplements the cloud rather than replacing it. Real-time processing, local autonomy, and bandwidth reduction are handled at the edge. Long-term storage, global data sharing, large-scale AI model training, and cross-system analytics remain best suited to centralized cloud infrastructure.

How does edge computing affect the cost of digital services?

Edge computing can reduce operational costs for service providers by cutting cloud bandwidth fees — savings that may be passed to consumers as lower prices or used to improve service reliability. However, upfront hardware investment is significant, and those costs can also appear in subscription pricing for edge-enabled products.

Sources

- Statista — Edge Computing Market Size and Growth Forecast

- MarketsandMarkets — Edge Computing Market Report 2024–2030

- Statista — Number of IoT Connected Devices Worldwide

- IDC — Edge Computing and Distributed Infrastructure Analysis

- Cisco — Internet of Things and Edge Infrastructure Overview

- Gartner — Edge Computing Definition and Market Forecast

- NIST — Cybersecurity for IoT and Edge Devices

- IBM — What Is Edge Computing? (Technology Overview)