Fact-checked by the VisualEnews editorial team

Quick Answer

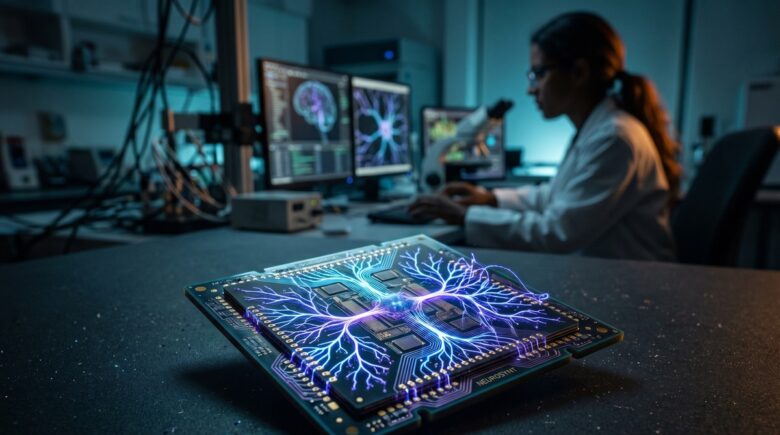

Neuromorphic chips are brain-inspired processors that mimic the structure of biological neurons, with reported energy-efficiency gains of up to 1,000 times over conventional CPUs on certain AI workloads. As of July 2025, the global neuromorphic computing market is projected to reach $7.6 billion by 2030, signaling a fundamental shift in how machines process information.

Neuromorphic chips are redefining the boundaries of artificial intelligence hardware by processing information the way the human brain does — through spikes, events, and massively parallel neural pathways rather than sequential clock cycles. As of July 2025, leading semiconductor companies including Intel, IBM, and Samsung are racing to commercialize this technology, with prototype chips already demonstrating processing speeds and power consumption profiles that traditional von Neumann architectures simply cannot match.

According to MarketsandMarkets’ 2024 neuromorphic computing report, the sector is expanding at a compound annual growth rate of 21.9%, driven by surging demand for low-power AI at the edge. Gartner has identified neuromorphic computing as one of the top emerging technologies expected to reach mainstream adoption within the next decade, placing it alongside quantum computing and generative AI as a transformative force in the semiconductor landscape.
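Growth figures like these follow the standard compound-growth relation: end value = start value × (1 + r)^years. The short sketch below applies that relation to the numbers quoted in this guide ($1.5 billion in 2024, $7.6 billion by 2030, and a 21.9% CAGR). It is plain arithmetic, not a market model, and the function names are illustrative.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate r such that start * (1 + r) ** years == end."""
    return (end / start) ** (1 / years) - 1


def project(start: float, rate: float, years: int) -> float:
    """Value after compounding `start` at `rate` per year for `years` years."""
    return start * (1 + rate) ** years


# Figures quoted in this guide, in US$ billions.
start_2024, end_2030, years = 1.5, 7.6, 2030 - 2024

print(f"CAGR implied by $1.5B -> $7.6B: {implied_cagr(start_2024, end_2030, years):.1%}")
print(f"$1.5B compounded at 21.9% to 2030: ${project(start_2024, 0.219, years):.1f}B")
```

On these inputs, moving from $1.5 billion to $7.6 billion in six years implies roughly 31% annual growth, while 21.9% compounded from $1.5 billion reaches only about $4.9 billion by 2030. The quoted 21.9% rate therefore presumably rests on a different base year or starting estimate, so it is worth checking which baseline a forecast uses before comparing figures across sources.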

In this guide, you will get a complete, evidence-backed breakdown of how neuromorphic chips work, which companies are leading development, where they are already being deployed, what they mean for the future of edge computing and AI, and exactly what steps technologists, investors, and enterprise decision-makers should take to prepare for their mainstream arrival.

Key Takeaways

- The global neuromorphic computing market is valued at approximately $1.5 billion in 2024 and projected to reach $7.6 billion by 2030 (MarketsandMarkets, 2024), representing one of the fastest-growing segments in semiconductor technology.

- Intel’s Loihi 2 neuromorphic chip supports up to 1 million neurons and 120 million synapses (Intel Labs, 2023), enabling real-time sensory processing at a fraction of the power cost of GPU-based systems.

- Neuromorphic chips consume as little as 70 milliwatts during active inference tasks (IEEE Spectrum, 2024), compared to hundreds of watts required by conventional AI accelerators like NVIDIA’s A100.

- IBM’s NorthPole chip achieved 22 times greater energy efficiency than leading GPUs on the ResNet-50 image classification benchmark, according to a 2023 IBM Research study published in the journal Science.

- The defense and aerospace sector accounts for 38% of current neuromorphic chip deployment (Allied Market Research, 2024), with autonomous navigation and real-time threat detection as primary use cases.

- Neuromorphic computing research publications have grown by 340% since 2015 (Scopus database, 2024), indicating accelerating academic and institutional investment in the field.

In This Guide

- What Are Neuromorphic Chips and How Do They Work?

- How Are Neuromorphic Chips Different from Traditional Processors?

- Who Is Building Neuromorphic Chips Right Now?

- Where Are Neuromorphic Chips Already Being Deployed?

- Why Is Energy Efficiency the Core Advantage of Neuromorphic Computing?

- What Are the Key Challenges and Limitations of Neuromorphic Chips?

- How Do Neuromorphic Chips Fit Into Edge Computing and AI?

- What Does the Neuromorphic Chip Market Look Like for Investors?

- What Is the Future Outlook for Neuromorphic Computing Through 2030?

What Are Neuromorphic Chips and How Do They Work?

Neuromorphic chips are integrated circuits designed to replicate the computational architecture of the human brain, using artificial neurons and synapses to process data through discrete electrical spikes rather than continuous binary calculations. This fundamentally different approach allows them to perform pattern recognition, sensory processing, and adaptive learning with drastically lower energy consumption than any conventional processor.

The term “neuromorphic” was coined by Carver Mead, a pioneering engineer at Caltech, in the late 1980s to describe circuits that mimic the neurobiological structures found in the nervous system. The core computational model is the spiking neural network (SNN), in which a neuron transmits information only when its activation threshold is crossed: a sparse, event-driven model that dramatically reduces unnecessary computation.
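This threshold behavior is often modeled with a leaky integrate-and-fire (LIF) neuron. The sketch below is a generic, discrete-time textbook version, not the neuron model of any particular chip. The threshold and decay values are arbitrary assumptions chosen for illustration.

```python
from typing import List


def lif_neuron(inputs: List[float], threshold: float = 1.0, decay: float = 0.9) -> List[int]:
    """Simulate one discrete-time leaky integrate-and-fire (LIF) neuron.

    At each step the membrane potential leaks (is multiplied by `decay`) and then
    integrates the input. When it reaches `threshold`, the neuron emits a spike (1)
    and resets to zero; otherwise it stays silent (0).
    """
    v = 0.0
    spikes = []
    for current in inputs:
        v = v * decay + current      # leak, then integrate
        if v >= threshold:
            spikes.append(1)         # threshold crossed: fire...
            v = 0.0                  # ...and reset
        else:
            spikes.append(0)         # below threshold: no output at all
    return spikes


# A weak, steady input only occasionally pushes the neuron over threshold.
print(lif_neuron([0.3] * 10))  # [0, 0, 0, 1, 0, 0, 0, 1, 0, 0]
```

With a steady input of 0.3 the neuron fires only on every fourth step. With no input it never fires, which is the situation in which event-driven hardware has essentially nothing to do.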

The Role of Spiking Neural Networks

Unlike artificial neural networks used in conventional deep learning, spiking neural networks encode information in the timing and frequency of electrical spikes, much like biological neurons. This temporal encoding allows neuromorphic chips to process time-series data — such as audio, video, and sensor feeds — more naturally and efficiently than GPU-based systems.
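Two textbook ways of turning an input intensity into spikes are rate coding, where the value sets how often a neuron fires, and latency (time-to-first-spike) coding, where the value sets when it first fires. The encoders below are minimal illustrations of those two ideas, assuming inputs normalized to the range 0 to 1. Real sensors and chips use their own encoding schemes.

```python
from typing import List


def rate_encode(value: float, steps: int) -> List[int]:
    """Rate code: spike frequency is proportional to the value (deterministic, no noise)."""
    value = min(max(value, 0.0), 1.0)
    acc = 0.0
    train = []
    for _ in range(steps):
        acc += value
        if acc >= 1.0:
            train.append(1)
            acc -= 1.0               # carry over any remainder
        else:
            train.append(0)
    return train


def latency_encode(value: float, steps: int) -> List[int]:
    """Latency code: stronger inputs spike earlier; a value of 0 never spikes."""
    value = min(max(value, 0.0), 1.0)
    train = [0] * steps
    if value > 0:
        train[round((1.0 - value) * (steps - 1))] = 1
    return train


print(rate_encode(0.5, 8))       # [0, 1, 0, 1, 0, 1, 0, 1]
print(latency_encode(0.9, 10))   # strong input: spike near the start
print(latency_encode(0.2, 10))   # weak input: spike near the end
```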

According to IEEE’s 2023 review of neuromorphic computing architectures, SNNs can achieve comparable accuracy to deep neural networks on classification tasks while using up to 100 times less energy per inference. This makes them particularly attractive for always-on edge applications where battery life is critical.

The human brain performs roughly 100 trillion synaptic operations per second while consuming only about 20 watts of power — a benchmark that neuromorphic chip designers use as their ultimate design target.

Key Architectural Components

A neuromorphic chip typically contains three core components: artificial neurons (processing units), artificial synapses (weighted connections between neurons), and on-chip memory that co-locates data and computation. This co-location directly addresses the von Neumann bottleneck, the speed-limiting gap between processor and memory that plagues conventional chip architectures.

Memristors, a class of non-volatile resistive memory devices, are increasingly used to implement artificial synapses because they can store analog weight values and adjust resistance in response to electrical stimuli — directly mimicking how biological synapses strengthen or weaken over time through a process known as synaptic plasticity.
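Synaptic plasticity of this kind is commonly modeled with spike-timing-dependent plasticity (STDP): a synapse strengthens when the presynaptic spike arrives shortly before the postsynaptic one and weakens when the order is reversed. The pair-based STDP sketch below is one standard formulation. The amplitudes, time constants, and clipping range (a stand-in for a memristor's bounded conductance) are illustrative assumptions, not parameters of any real device.

```python
import math


def stdp_update(weight: float, t_pre: float, t_post: float,
                a_plus: float = 0.01, a_minus: float = 0.012,
                tau_plus: float = 20.0, tau_minus: float = 20.0,
                w_min: float = 0.0, w_max: float = 1.0) -> float:
    """Pair-based STDP: return the new weight after one pre/post spike pair (times in ms)."""
    dt = t_post - t_pre                         # positive when pre fires before post
    if dt > 0:
        dw = a_plus * math.exp(-dt / tau_plus)      # potentiation, stronger for close pairs
    elif dt < 0:
        dw = -a_minus * math.exp(dt / tau_minus)    # depression when the order is reversed
    else:
        dw = 0.0
    # Clip to the range a physical synapse (e.g. a memristor) can represent.
    return min(w_max, max(w_min, weight + dw))


print(stdp_update(0.5, t_pre=10.0, t_post=15.0))  # slightly above 0.5
print(stdp_update(0.5, t_pre=15.0, t_post=10.0))  # slightly below 0.5
```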

How Are Neuromorphic Chips Different from Traditional Processors?

The fundamental difference between neuromorphic chips and traditional processors lies in their computational paradigm: neuromorphic chips are event-driven and asynchronous, while CPUs and GPUs are clock-driven and synchronous. This architectural gap translates into orders-of-magnitude differences in power consumption and latency for specific AI workloads.

A standard CPU processes instructions sequentially, fetching data from memory, executing operations, and writing results back — a cycle that repeats billions of times per second regardless of whether meaningful computation is occurring. A neuromorphic chip, by contrast, remains mostly idle and consumes near-zero power until an input event triggers a spike cascade through its neural network.
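The event-driven model can be illustrated with a tiny simulator that keeps a time-ordered queue of spike events and touches a neuron only when an event reaches it. A clock-driven loop would instead update every neuron at every tick. This is a simplified sketch with no membrane leak, a fixed synaptic delay, and a hypothetical `run_event_driven` helper; it is not how any particular chip schedules its work.

```python
import heapq
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical network description: presynaptic neuron -> [(postsynaptic neuron, weight)]
Synapses = Dict[str, List[Tuple[str, float]]]


def run_event_driven(synapses: Synapses,
                     input_events: List[Tuple[float, str, float]],
                     threshold: float = 1.0,
                     delay: float = 1.0) -> Tuple[List[Tuple[float, str]], int]:
    """Process spike events in time order; neurons without incoming events are never touched.

    Returns the emitted spikes as (time, neuron) and the number of neuron updates performed.
    """
    potential: Dict[str, float] = defaultdict(float)
    queue = list(input_events)          # each event: (time, target neuron, input amount)
    heapq.heapify(queue)                # earliest event first
    spikes: List[Tuple[float, str]] = []
    updates = 0
    while queue:                        # an empty queue means the network is idle
        time, neuron, amount = heapq.heappop(queue)
        updates += 1
        potential[neuron] += amount
        if potential[neuron] >= threshold:
            potential[neuron] = 0.0     # reset after firing
            spikes.append((time, neuron))
            for post, weight in synapses.get(neuron, []):
                heapq.heappush(queue, (time + delay, post, weight))
    return spikes, updates


# A three-neuron chain a -> b -> c: one input event triggers a short spike cascade.
chain: Synapses = {"a": [("b", 1.0)], "b": [("c", 1.0)]}
spikes, updates = run_event_driven(chain, [(0.0, "a", 1.0)])
print(spikes)   # [(0.0, 'a'), (1.0, 'b'), (2.0, 'c')]
print(updates)  # 3
```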

The Memory-Processing Divide

Traditional computing architectures suffer from the von Neumann bottleneck, where the physical separation of memory and processing units creates a data transfer choke point. Modern AI workloads — which require moving enormous parameter matrices between GPU memory and compute cores — are particularly vulnerable to this bottleneck.

Neuromorphic chips sidestep this entirely through in-memory computing, where synaptic weights are stored directly within or adjacent to the processing elements. As noted in a landmark 2023 Nature study on brain-inspired computing, this co-located architecture reduces data movement energy costs by up to 97% compared to GPU-based inference pipelines.
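In memristor-based designs, in-memory computing can be illustrated with an idealized crossbar: each device's conductance stores a weight, Ohm's law turns the input voltage into a current through that device, and Kirchhoff's current law sums the currents on each output wire, so a matrix-vector product happens where the weights are stored. The sketch below ignores wire resistance, device variation, and analog-to-digital conversion, and `crossbar_read` is a hypothetical helper. Digital designs instead keep weights in on-chip memory placed beside the logic.

```python
from typing import List


def crossbar_read(conductances: List[List[float]], voltages: List[float]) -> List[float]:
    """Idealized crossbar read-out.

    conductances[i][j] is the device conductance (siemens) joining input row i
    to output column j; voltages[i] is the voltage (volts) applied to row i.
    Each device passes current G * V (Ohm's law) and each column wire sums its
    devices' currents (Kirchhoff's current law), so column j carries
    sum_i G[i][j] * V[i] amperes.
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for i, v in enumerate(voltages):
        for j in range(n_cols):
            currents[j] += conductances[i][j] * v
    return currents


# Two inputs, two outputs; weights stored as conductances of a few microsiemens.
G = [[1e-6, 2e-6],
     [3e-6, 4e-6]]
V = [0.1, 0.2]
print(crossbar_read(G, V))  # about 0.7 microamps and 1.0 microamps
```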

| Feature | Traditional CPU/GPU | Neuromorphic Chip |
|---|---|---|
| Processing Model | Synchronous, clock-driven | Asynchronous, event-driven |
| Power Consumption (AI inference) | 250–700 watts | 70–500 milliwatts |
| Memory Architecture | Separated (von Neumann) | In-memory / co-located |
| Data Encoding | Binary (0s and 1s) | Spike timing and frequency |
| Latency for Edge AI | Milliseconds to seconds | Microseconds |
| Learning Capability | Offline (batch training) | Online / continuous learning |
| Best Use Case | General computation, training | Inference, sensory processing |

It is important to note that neuromorphic chips are not designed to replace CPUs or GPUs across all workloads. They are purpose-built accelerators optimized for specific tasks — primarily real-time sensory inference, anomaly detection, and adaptive pattern recognition at the edge.

IBM’s NorthPole neuromorphic chip achieved 22 times the energy efficiency of leading GPU-based systems on the ResNet-50 image classification benchmark, according to a peer-reviewed study published in Science in October 2023 (IBM Research, 2023).

Who Is Building Neuromorphic Chips Right Now?

The neuromorphic chip landscape is led by Intel, IBM, and a growing cohort of startups including BrainChip and SynSense (formerly aiCTX), with major investment also flowing from DARPA, the European Union’s Human Brain Project, and defense contractors. The competitive field spans three tiers: established semiconductor giants, specialized startups, and government-funded research initiatives.

Intel’s Loihi Platform

Intel’s neuromorphic research division has developed two generations of its flagship chip, Loihi and Loihi 2. The second-generation Loihi 2, released in 2021, supports up to 1 million programmable neurons and up to 120 million synapses per chip, according to Intel Labs’ official neuromorphic computing documentation.

Intel has deployed Loihi-based systems through its Intel Neuromorphic Research Community (INRC), a collaboration network involving more than 200 organizations across academia, government, and industry as of 2024. The INRC has produced applications ranging from olfactory sensing (electronic noses) to robotic control and tactile feedback systems.

IBM’s NorthPole and TrueNorth

IBM Research has produced two landmark neuromorphic chips. TrueNorth, introduced in 2014, contained 4,096 cores and 1 million neurons on a single chip while consuming just 70 milliwatts. The more recent NorthPole chip, unveiled in October 2023, takes a different but complementary approach — integrating memory directly into a dense compute fabric inspired by cortical neural organization.

NorthPole’s results were striking enough to be published in the journal Science, where IBM researchers demonstrated that the chip outperformed GPU and CPU competitors on energy efficiency by factors ranging from 4 to 25 times, depending on the benchmark and network architecture tested.

“NorthPole eliminates off-chip memory, making it an inference machine that operates at the boundary of what physics allows. It is not just incremental improvement — it is a fundamentally different way of organizing computation.”

BrainChip and the Startup Ecosystem

BrainChip, an Australian-American semiconductor company, has commercialized its Akida neuromorphic processor — making it one of the few neuromorphic chips available for purchase by OEM manufacturers and embedded systems developers. Akida uses a patented spike-based neural processing unit (NPU) and has been integrated into automotive, industrial IoT, and smart security camera systems.

Other notable players include SynSense (formerly aiCTX), a Zurich-based startup developing the Dynap-CNN chip for ultra-low-power vision processing, and Prophesee, a French company building neuromorphic event cameras that pair with SNN processors for real-time visual sensing. The European Union’s Human Brain Project has invested over 1 billion euros since 2013 to accelerate neuromorphic research across the continent.

Where Are Neuromorphic Chips Already Being Deployed?

Neuromorphic chips are not purely experimental — they are already deployed in defense systems, robotics, smart sensors, and scientific research instruments, with commercial consumer applications beginning to emerge in 2024 and 2025. The deployment landscape is concentrated in use cases where conventional AI hardware is too power-hungry or too slow.

Defense and Aerospace Applications

The defense sector is the single largest current adopter of neuromorphic technology. According to Allied Market Research’s 2024 sector analysis, defense and aerospace account for 38% of all neuromorphic chip deployments, driven by DARPA-funded programs focused on autonomous navigation, threat detection, and battlefield sensor processing.

DARPA’s Microsystems Technology Office has funded multiple neuromorphic programs, including its SyNAPSE initiative, which produced the foundational research that led to IBM’s TrueNorth chip. Current programs focus on embedding neuromorphic processors in autonomous unmanned aerial vehicles (UAVs) to enable real-time obstacle avoidance without reliance on cloud connectivity.

Industrial IoT and Smart Sensors

In industrial environments, neuromorphic chips are being deployed for predictive maintenance — continuously monitoring vibration, temperature, and acoustic signatures from machinery without draining battery-powered sensor nodes. Bosch, a leading supplier of industrial sensors, has partnered with Intel’s INRC to evaluate Loihi-based anomaly detection at manufacturing facilities.

BrainChip’s Akida processor is currently embedded in smart security cameras from multiple OEM partners, enabling on-device face and gesture recognition at under 300 milliwatts — compared to the 5–15 watts typically required by conventional neural network accelerators running similar inference tasks.

Neuromorphic chips are being used in prosthetic limb research at Johns Hopkins University’s Applied Physics Laboratory, where Loihi-based systems process tactile sensor data fast enough to give amputees near-real-time sensory feedback through their prosthetic hands.

The intersection of neuromorphic chips with edge computing represents one of the most promising deployment frontiers. For a deeper understanding of how edge infrastructure is evolving to support these processors, see our explainer on what edge computing is and how it works.

Why Is Energy Efficiency the Core Advantage of Neuromorphic Computing?

Energy efficiency is the defining competitive advantage of neuromorphic chips, making them uniquely suited for an era in which AI inference is migrating from data centers to edge devices with tight power budgets. The efficiency gains are not marginal — they are measured in orders of magnitude for specific workloads.

The AI industry’s energy consumption has become a critical concern. According to the International Energy Agency’s Electricity 2024 report, data centers consumed approximately 460 terawatt-hours (TWh) globally in 2022, a figure projected to double by 2026 as AI workloads scale. Neuromorphic computing offers a structural solution to this trajectory.

Sparse Computation and Event-Driven Processing

The energy advantage of neuromorphic chips stems from their sparse, event-driven processing model. In a spiking neural network, the vast majority of neurons remain silent at any given moment; only those receiving sufficient input fire a spike. This sparsity means that most of the chip’s circuits are not switching during idle periods and therefore draw very little power.

In contrast, a conventional GPU executes dense matrix multiplications continuously, performing every multiply-accumulate even when most of the activations involved are zero or near zero, so much of that work is wasted. Intel estimates that Loihi 2 achieves 60 times better energy efficiency than a conventional embedded CPU on sparse coding tasks, based on internal benchmarks published in its 2023 technical whitepaper.
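The difference between dense and event-driven processing can be made concrete by counting operations. In the sketch below, a dense matrix-vector product performs one multiply-accumulate per weight, while the event-driven version visits only the inputs that spiked and, because spikes are binary, only adds weights. It counts operations, not joules: actual energy savings depend on the hardware. The 64×256 layer with one active input in twenty is an arbitrary illustrative choice.

```python
import random
from typing import List, Tuple

Matrix = List[List[float]]


def dense_matvec(weights: Matrix, x: List[int]) -> Tuple[List[float], int]:
    """Clock-driven style: one multiply-accumulate per weight, zeros included."""
    out = [0.0] * len(weights)
    ops = 0
    for i, row in enumerate(weights):
        for j, w in enumerate(row):
            out[i] += w * x[j]
            ops += 1
    return out, ops


def event_driven_matvec(weights: Matrix, spikes: List[int]) -> Tuple[List[float], int]:
    """Event-driven style: visit only inputs that spiked; binary spikes mean add-only."""
    out = [0.0] * len(weights)
    ops = 0
    for j, s in enumerate(spikes):
        if not s:
            continue                    # silent input: no work at all
        for i in range(len(weights)):
            out[i] += weights[i][j]
            ops += 1
    return out, ops


rng = random.Random(0)                  # fixed seed so the run is repeatable
weights = [[rng.uniform(-1.0, 1.0) for _ in range(256)] for _ in range(64)]
spikes = [1 if j % 20 == 0 else 0 for j in range(256)]   # 13 of 256 inputs active

dense_out, dense_ops = dense_matvec(weights, spikes)
event_out, event_ops = event_driven_matvec(weights, spikes)
assert all(abs(a - b) < 1e-9 for a, b in zip(dense_out, event_out))
print(f"dense ops: {dense_ops}, event-driven ops: {event_ops}")  # 16384 vs 832
```

Both functions return the same outputs; the event-driven version simply skips the work that silent inputs would otherwise cost.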

Implications for Battery-Powered Devices

The wearable technology market is one area where neuromorphic efficiency could be transformative. Current AI-capable wearables — from smart hearing aids to health monitoring bands — are severely constrained by battery capacity. As explored in our coverage of how wearable technology is transforming personal health tracking, the demand for continuous on-device AI inference is one of the central challenges facing the sector.

A neuromorphic chip capable of running continuous gesture recognition, heart rhythm analysis, or keyword detection at under 10 milliwatts could extend the battery life of wearable devices from hours to weeks — a genuinely transformative outcome for consumer health technology.
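A first-order battery estimate is simply usable energy divided by average power draw. The sketch below assumes a hypothetical 1,000 mWh wearable battery and ignores the display, radio, conversion losses, and battery aging, so it only shows how the power figures scale.

```python
def battery_life_hours(capacity_mwh: float, avg_power_mw: float) -> float:
    """Idealized runtime: usable battery energy divided by average power draw."""
    return capacity_mwh / avg_power_mw


CAPACITY_MWH = 1000.0  # hypothetical battery, roughly 270 mAh at 3.7 V

# Hypothetical average draws, from an accelerator-class budget down to a duty-cycled device.
for power_mw in (500.0, 10.0, 2.0):
    hours = battery_life_hours(CAPACITY_MWH, power_mw)
    print(f"{power_mw:6.1f} mW -> {hours:7.1f} h ({hours / 24:5.1f} days)")
```

Under these assumptions, a 500 mW draw lasts 2 hours, 10 mW lasts about 100 hours (roughly four days), and an average of about 2 mW reaches roughly three weeks. Reaching weeks therefore depends on keeping the whole device's average draw, not just the inference workload, in the low-milliwatt range.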

Intel’s Loihi 2 performs keyword spotting tasks at 7.3 times lower energy cost than a comparable ARM Cortex-M4 embedded processor, while delivering 3.5 times lower response latency, according to Intel Labs’ 2023 benchmark comparisons.

What Are the Key Challenges and Limitations of Neuromorphic Chips?

Despite their compelling advantages, neuromorphic chips face significant technical, software, and commercial obstacles that have slowed their mainstream adoption. The primary barriers are programming complexity, limited training toolchains, hardware-software ecosystem immaturity, and the challenge of scaling accuracy to match conventional deep learning models.

Programming and Software Ecosystem Gaps

The most immediate practical challenge is software. The deep learning ecosystem (TensorFlow, PyTorch, CUDA) is extensively developed, well documented, and used by millions of engineers worldwide. The neuromorphic equivalent is years behind. Intel’s neuromorphic framework, Lava, is open-source but requires specialized knowledge of spiking neural networks that most AI practitioners do not possess.

Converting a pre-trained conventional neural network to run efficiently on a neuromorphic chip is not straightforward. Techniques such as ANN-to-SNN conversion exist, but they often result in accuracy drops of 2–10 percentage points compared to the original network, according to a 2024 survey published in the journal Neural Networks. This accuracy gap remains a key reason why neuromorphic chips have not yet displaced GPU accelerators in production AI pipelines.
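One widely used family of ANN-to-SNN conversion methods relies on rate coding: an integrate-and-fire neuron driven by a constant input fires at a rate that approximates a ReLU activation clipped at the firing threshold. The sketch below, built on that assumption, also shows one source of the accuracy loss described above. Over T time steps the firing rate can only take multiples of 1/T, so short simulation windows quantize activations coarsely.

```python
def clipped_relu(x: float, threshold: float = 1.0) -> float:
    """ANN activation that the spiking neuron should approximate (normalized to 0..1)."""
    return min(max(x, 0.0), threshold) / threshold


def if_firing_rate(x: float, steps: int, threshold: float = 1.0) -> float:
    """Firing rate of a non-leaky integrate-and-fire neuron fed a constant input x."""
    v = 0.0
    spikes = 0
    for _ in range(steps):
        v += x
        if v >= threshold:
            spikes += 1
            v -= threshold  # "reset by subtraction" keeps the leftover charge
    return spikes / steps


target = clipped_relu(0.37)
for steps in (10, 100, 1000):
    rate = if_firing_rate(0.37, steps)
    print(f"T={steps:5d}  spike rate={rate:.3f}  target={target:.3f}  error={abs(rate - target):.3f}")
```

Negative inputs never fire (the ReLU's zero region), inputs above the threshold saturate at one spike per step, and the approximation error shrinks as the time window grows, at the cost of more latency per inference.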

Organizations evaluating neuromorphic chips for commercial deployment should account for significant retraining and software integration costs. Current estimates suggest that adapting existing AI models for neuromorphic hardware can require 6–18 months of specialized engineering work, depending on application complexity.

Scaling and Standardization Challenges

There is currently no industry-wide standard for neuromorphic hardware, programming interfaces, or benchmarking methodologies. Each major chip — Loihi 2, NorthPole, Akida — uses a different neural model, programming paradigm, and interconnect standard. This fragmentation makes it difficult for enterprises to commit to a platform without risking obsolescence.

The IEEE Rebooting Computing initiative and the neuromorphic working group within the Semiconductor Industry Association have both called for standardization, but consensus remains elusive as competing chipmakers seek to differentiate their platforms. This mirrors the early years of GPU computing before CUDA established NVIDIA’s de facto standard, a transition that took nearly a decade.

How Do Neuromorphic Chips Fit Into Edge Computing and AI?

Neuromorphic chips are ideally positioned as the processing core for the next generation of edge AI devices — systems that must run sophisticated inference tasks locally, without cloud connectivity, under strict power and latency constraints. They represent a complementary layer to the broader edge computing stack rather than a wholesale replacement for existing infrastructure.

The global edge AI market is expanding rapidly. According to Grand View Research’s 2024 market analysis, the edge AI hardware sector was valued at $15.7 billion in 2023 and is projected to grow at a CAGR of 20.8% through 2030. Neuromorphic processors are expected to capture an increasing share of this market as their software ecosystems mature.

Real-Time Sensory Processing at the Edge

The most compelling near-term application of neuromorphic chips in edge environments is real-time sensory processing: tasks involving continuous streams of audio, video, or sensor data that need responses faster than a cloud round-trip allows. Autonomous vehicles, surgical robots, and industrial safety systems all fit this profile precisely.

Neuromorphic chips also pair naturally with event cameras — a new class of vision sensor that, like the chips themselves, only outputs data when pixel intensity changes rather than at fixed frame rates. This event-driven sensor-processor pairing can track fast-moving objects with microsecond latency while consuming as little as 1/100th the bandwidth of conventional frame-based cameras, according to Prophesee’s published technical data.
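An event camera's output can be roughly emulated from two ordinary frames: a pixel emits an event, tagged with the sign of the change, when its log intensity changes by more than a contrast threshold. The sketch below is a simplified, frame-based approximation (real event pixels respond asynchronously in analog circuitry). `frames_to_events` is a hypothetical helper unrelated to any vendor SDK, and the 0.2 threshold is an arbitrary assumption.

```python
import math
from typing import List, Tuple

Frame = List[List[float]]


def frames_to_events(prev: Frame, curr: Frame,
                     contrast_threshold: float = 0.2) -> List[Tuple[int, int, int]]:
    """Emit (x, y, polarity) for pixels whose log intensity changed by at least the threshold.

    Polarity is +1 for brighter and -1 for darker; unchanged pixels produce nothing.
    The small epsilon avoids log(0) for fully dark pixels.
    """
    eps = 1e-6
    events = []
    for y, (row_prev, row_curr) in enumerate(zip(prev, curr)):
        for x, (p, c) in enumerate(zip(row_prev, row_curr)):
            delta = math.log(c + eps) - math.log(p + eps)
            if abs(delta) >= contrast_threshold:
                events.append((x, y, 1 if delta > 0 else -1))
    return events


# A single bright spot moves one pixel to the right in an otherwise static 4x4 scene.
prev = [[10.0] * 4 for _ in range(4)]
curr = [[10.0] * 4 for _ in range(4)]
prev[1][1] = 100.0
curr[1][2] = 100.0
print(frames_to_events(prev, curr))  # [(1, 1, -1), (2, 1, 1)]
```

The moving spot produces two events, while a frame-based camera would send all 16 pixel values again for every frame, including the static background.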

Complementing Quantum and Conventional Computing

The computing landscape of the 2030s is unlikely to be dominated by a single chip architecture. Just as quantum computing will reshape specific computational domains like cryptography and molecular simulation, neuromorphic chips will dominate sensory inference and adaptive learning at the edge — while GPUs continue to handle large-scale model training and CPUs manage general-purpose workloads.

This heterogeneous computing model — where specialized chips handle specific tasks within a larger system — is already visible in modern smartphones, which contain separate CPUs, GPUs, neural processing units (NPUs), and signal processors. Neuromorphic chips are the logical next addition to this architectural palette for future intelligent devices.

“We are moving from a world where intelligence lives in the cloud to one where it lives at the point of perception. Neuromorphic chips are the enabling technology for that transition — they let devices think locally, continuously, and efficiently in ways that no other architecture currently allows.”

What Does the Neuromorphic Chip Market Look Like for Investors?

The neuromorphic chip market is in a high-growth, pre-mass-commercialization phase — presenting significant opportunity alongside meaningful technology and timing risk. Investors and enterprise technology decision-makers should understand the market structure, key players, and the sectors driving near-term revenue before committing capital.

The market is currently segmented between publicly traded companies with neuromorphic exposure — such as Intel (INTC), IBM (IBM), and BrainChip Holdings (ASX: BRN) — and a larger group of private startups backed by venture capital and government grants. Intel and IBM derive a small fraction of overall revenue from neuromorphic activities, while BrainChip is the only pure-play publicly traded neuromorphic company as of mid-2025.

DARPA and Government Funding Flows

Government funding is a critical driver of the neuromorphic market’s current momentum. DARPA has allocated over $400 million to neuromorphic and brain-inspired computing research programs since 2008, including the SyNAPSE program that produced TrueNorth and ongoing programs under the Electronics Resurgence Initiative (ERI). The European Union’s Horizon Europe program has similarly committed neuromorphic research funding as part of its broader semiconductor sovereignty strategy.

The U.S. CHIPS and Science Act of 2022 allocated $52.7 billion for domestic semiconductor research and manufacturing, with a portion explicitly targeting emerging computing architectures including neuromorphic systems. This creates a structural tailwind for domestic neuromorphic chip development over the next decade.

| Company | Key Chip | Stage | Primary Markets | Notable Partnership/Deployment |
|---|---|---|---|---|
| Intel | Loihi 2 | Research/Early commercial | Research, Defense, Robotics | 200+ INRC organizations |
| IBM | NorthPole | Research prototype | AI inference, Edge | U.S. Air Force Research Lab |
| BrainChip | Akida | Commercial | IoT, Automotive, Security | Mercedes-Benz, Renesas |
| SynSense | Dynap-CNN | Early commercial | Vision, Robotics | EU Human Brain Project |
| Prophesee | Metavision (event camera) | Commercial | Automotive, Industrial | Sony, Bosch |

The convergence of neuromorphic computing with broader AI hardware trends is also reshaping how technology companies think about infrastructure costs. For context on how AI-driven systems are already changing consumer and enterprise software, see our analysis of how AI is changing the way we search the internet.

What Is the Future Outlook for Neuromorphic Computing Through 2030?

Neuromorphic computing is on a trajectory toward mainstream commercial deployment by the late 2020s, with the most significant near-term breakthroughs expected in automotive AI, medical devices, and telecommunications infrastructure. The technology will not replace conventional computing but will become an essential specialized layer within heterogeneous computing architectures.

Three converging trends are accelerating this timeline. First, the physical limits of conventional silicon scaling (Moore’s Law slowdown) are creating urgent demand for alternative architectures. Second, the exponential growth of edge AI applications is creating a market that neuromorphic chips are uniquely suited to serve. Third, improving ANN-to-SNN conversion toolchains are steadily closing the programming accessibility gap that has historically slowed adoption.

Automotive and Autonomous Systems

The automotive sector represents the single largest near-term commercial opportunity for neuromorphic chips. Next-generation autonomous driving systems require continuous processing of LiDAR, radar, and camera data at latencies below 10 milliseconds — with a strict power budget driven by the range constraints of electric vehicles. Neuromorphic processors, particularly when paired with event cameras, are architecturally well-suited to this requirement.

BrainChip has already announced a collaboration with Renesas Electronics, a major automotive semiconductor supplier, to evaluate Akida integration in advanced driver assistance systems (ADAS). A partnership of this kind with an established automotive chip supplier signals that neuromorphic technology is beginning to cross the threshold from research curiosity to production-grade consideration.

Telecommunications and 6G Infrastructure

As 5G infrastructure matures and 6G research accelerates, telecommunications companies are exploring neuromorphic chips for real-time network optimization, anomaly detection, and adaptive beamforming. For anyone tracking the evolution of wireless technology more broadly, our coverage of 5G vs Wi-Fi 7 and which wireless technology you should be using provides relevant context for how these networks are evolving.

Samsung Electronics, which is both a semiconductor manufacturer and a major 5G infrastructure provider, has published research on neuromorphic-assisted network management systems that could reduce base station energy consumption by up to 40%, according to Samsung Research’s 2024 technical papers. If validated at scale, this would represent one of the highest-value commercial deployments of neuromorphic technology to date.

The number of neuromorphic computing patents filed globally grew from fewer than 500 per year in 2015 to over 3,200 per year in 2023, according to the World Intellectual Property Organization (WIPO, 2024) — a 540% increase reflecting the rapid intensification of commercial R&D activity.

Integration With Generative AI

One of the most intriguing open questions in the field is how neuromorphic chips will interact with the large language model (LLM) ecosystem that has dominated AI headlines since 2023. Current neuromorphic hardware is not suited to running models like GPT-4 or Llama 3 — these require dense, synchronous matrix operations that SNNs cannot efficiently replicate.

However, neuromorphic chips could serve as the sensory front-end for hybrid AI systems — processing raw audio, video, or sensor data and passing compressed, meaningful representations upstream to LLM-based reasoning systems. This architecture could dramatically reduce the total energy cost of embodied AI systems such as humanoid robots and autonomous agents.

Intel’s neuromorphic research team demonstrated in 2023 that a Loihi 2 chip could learn to identify 10 different odors from a chemical sensor array with 92% accuracy after just a single exposure to each smell — mimicking the one-shot learning capability of the biological olfactory system.

More efficient AI hardware also affects technology budgeting. Organizations investing in AI infrastructure today would benefit from understanding how hardware costs are likely to evolve.

Real-World Example: BrainChip Akida in Smart Security Infrastructure

A mid-sized European airport with 47 security camera nodes deployed a pilot system in Q3 2024 using BrainChip’s Akida neuromorphic processor embedded in smart camera housings from an OEM partner. The previous system ran inference on a centralized GPU server drawing approximately 1,400 watts continuously and introduced an average latency of 340 milliseconds per facial recognition query due to network round-trip time.

After deploying Akida-based edge inference across the 47 camera nodes, each camera consumed approximately 280 milliwatts for continuous inference — a system-wide reduction from 1,400 watts (centralized) to approximately 13 watts total for distributed inference. Average recognition latency dropped to 8 milliseconds per query. The airport reported annual energy cost savings of approximately $19,400 on camera inference alone, with a hardware payback period of approximately 14 months. False positive rates remained within 0.3% of the previous GPU-based system’s performance benchmark.
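The power figures in this example can be checked with simple arithmetic. The sketch below uses only the numbers reported above, assumes round-the-clock operation, and also computes the electricity price that the reported $19,400 saving would imply if it came from inference power alone.

```python
# Figures as reported in the example above.
CAMERAS = 47
PER_CAMERA_W = 0.280        # 280 mW per Akida-equipped camera
CENTRAL_GPU_W = 1_400.0     # previous centralized GPU server
REPORTED_SAVING_USD = 19_400
HOURS_PER_YEAR = 24 * 365   # assumption: continuous operation

distributed_w = CAMERAS * PER_CAMERA_W
saved_kwh = (CENTRAL_GPU_W - distributed_w) * HOURS_PER_YEAR / 1_000
implied_usd_per_kwh = REPORTED_SAVING_USD / saved_kwh

print(f"distributed inference power: {distributed_w:.2f} W")
print(f"energy saved per year: {saved_kwh:,.0f} kWh")
print(f"implied price if savings were inference power only: ${implied_usd_per_kwh:.2f}/kWh")
```

The per-camera and total power figures are consistent (47 × 280 mW ≈ 13.2 W), and the reduction works out to roughly 12,150 kWh a year. Covering $19,400 from that energy alone would require about $1.60 per kWh, well above typical commercial electricity tariffs, so the reported saving presumably includes costs beyond the inference hardware's own draw; the example does not specify them.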

Your Action Plan

- Audit your current AI hardware infrastructure for edge deployment gaps

Identify workloads in your organization that currently require cloud connectivity for AI inference but would benefit from local, low-latency processing. Use the criteria of power budget, latency tolerance, and data privacy requirements to flag candidate applications for neuromorphic evaluation.

- Access Intel’s Loihi 2 through the INRC research program

Apply to the Intel Neuromorphic Research Community (INRC) to gain access to Loihi 2 hardware and the Lava open-source programming framework. The program is free for qualified researchers and enterprise partners and provides access to cloud-hosted neuromorphic systems without requiring chip procurement.

- Explore BrainChip’s Akida development kit for commercial evaluation

BrainChip offers the Akida PCIe board and its MetaTF development framework, which includes AkidaNet reference models, for commercial developers. This is currently the most accessible commercial neuromorphic development platform, with pre-trained SNN models available for image classification, object detection, and keyword spotting tasks. Evaluation kits start at approximately $500.

- Upskill your AI team on spiking neural network fundamentals

Enroll engineering staff in the Nengo neuromorphic programming course offered by Applied Brain Research, or the SNN-specific modules available on Coursera through institutions including Johns Hopkins University. Familiarity with SNN theory is a prerequisite for productive neuromorphic development work.

- Monitor DARPA and EU Human Brain Project publications for pre-competitive research

Subscribe to the public-facing newsletters from DARPA’s Microsystems Technology Office (MTO) and the EU Human Brain Project. Both publish technical summaries of funded research before it enters commercial channels, providing a 12–24 month preview of where the technology is heading.

- Evaluate BrainChip (ASX: BRN) as a pure-play neuromorphic investment

For investors seeking neuromorphic exposure, review BrainChip’s quarterly earnings releases on the Australian Securities Exchange (ASX) and its U.S. OTC listing. Assess partnership announcements with automotive suppliers like Renesas as leading indicators of commercial traction. Balance this against the execution and timing risks inherent in early-stage semiconductor commercialization.

-

Track IEEE and Nature publications for benchmark progress

Set up Google Scholar alerts for “neuromorphic chip benchmark 2025” and “spiking neural network accuracy” to receive automatic notifications when peer-reviewed performance data is published. Benchmark improvements in ANN-to-SNN conversion accuracy are the clearest leading indicator of neuromorphic chips becoming viable for mainstream production AI pipelines.

-

Assess the CHIPS and Science Act funding opportunities relevant to your sector

U.S.-based organizations in eligible sectors (manufacturing, defense, healthcare) should review the National Science Foundation’s (NSF) funding programs under the CHIPS and Science Act at nsf.gov/chips. Several grant programs explicitly target emerging computing architectures and could offset the cost of internal neuromorphic R&D programs.

Frequently Asked Questions

What exactly are neuromorphic chips and why do they matter?

Neuromorphic chips are processors designed to mimic the architecture of the human brain, using artificial neurons and synapses to process information through electrical spikes rather than synchronous clock cycles. They matter because they can perform AI inference tasks at up to 1,000 times lower energy than conventional chips, making always-on AI at the edge practically feasible for the first time.
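As a rough illustration of spike-based input, the sketch below converts a sampled sensor signal into sparse ON/OFF events using send-on-delta (delta modulation) encoding, one of several schemes in use (rate and latency coding are others). The helper `delta_encode` and the threshold value are hypothetical; real event-based sensors and neuromorphic front ends implement encoding in hardware with their own parameters.

```python
from typing import List, Tuple


def delta_encode(samples: List[float], threshold: float) -> List[Tuple[int, int]]:
    """Convert a sampled signal into sparse (time_index, polarity) events.

    An event is emitted only when the signal has moved by at least `threshold`
    since the last emitted event: +1 for an increase, -1 for a decrease.
    A signal that does not change produces no events at all.
    """
    events: List[Tuple[int, int]] = []
    reference = samples[0] if samples else 0.0
    for t, value in enumerate(samples):
        while value - reference >= threshold:   # signal rose: ON events
            reference += threshold
            events.append((t, +1))
        while reference - value >= threshold:   # signal fell: OFF events
            reference -= threshold
            events.append((t, -1))
    return events


if __name__ == "__main__":
    signal = [0.0, 0.0, 0.0, 0.5, 1.0, 1.0, 1.0, 0.2]
    print(delta_encode(signal, threshold=0.4))  # → [(3, 1), (4, 1), (7, -1)]
```

Eight samples collapse into three events, and the flat stretches generate nothing, which is the property event-driven hardware exploits to skip work.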

Are neuromorphic chips available to buy right now?

Yes, in limited form. BrainChip’s Akida processor is the most commercially accessible neuromorphic chip, available through OEM partnerships and developer evaluation kits. Intel’s Loihi 2 is accessible through the Intel Neuromorphic Research Community program but is not sold as a retail component. Mass-market consumer availability is not expected until approximately 2027–2029.

How do neuromorphic chips compare to AI chips like NVIDIA GPUs?

Neuromorphic chips are not direct competitors to NVIDIA GPUs for most current AI tasks. GPUs excel at dense matrix operations required for training large models, while neuromorphic chips excel at sparse, event-driven inference tasks at the edge. The two architectures are complementary — neuromorphic chips handle real-time sensory input, while GPUs handle large-scale training and reasoning workloads.
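To make the dense-versus-sparse contrast concrete, the following sketch evaluates one layer two ways for binary spike inputs: a dense pass that performs a multiply-accumulate for every weight, as a GPU kernel would, and an event-driven pass that only does work for inputs that spiked. It is plain Python under stated assumptions (binary spikes, operation counts as a stand-in for cost) and does not model memory traffic, timing, or any real chip's energy per operation.

```python
from typing import List, Tuple

Matrix = List[List[float]]  # weights[output_index][input_index]


def dense_layer(weights: Matrix, spikes: List[int]) -> Tuple[List[float], int]:
    """Dense, GPU-style evaluation: one multiply-accumulate per weight,
    whether or not the corresponding input spiked."""
    outputs: List[float] = []
    ops = 0
    for row in weights:
        acc = 0.0
        for w, s in zip(row, spikes):
            acc += w * s
            ops += 1
        outputs.append(acc)
    return outputs, ops


def event_driven_layer(weights: Matrix, spikes: List[int]) -> Tuple[List[float], int]:
    """Event-driven evaluation: only inputs that spiked trigger work,
    and each spike adds its column of weights to the outputs."""
    outputs = [0.0] * len(weights)
    ops = 0
    for i, s in enumerate(spikes):
        if s:  # an input event arrived on line i
            for j, row in enumerate(weights):
                outputs[j] += row[i]
                ops += 1
    return outputs, ops


if __name__ == "__main__":
    weights = [[0.1 * (i + j) for i in range(8)] for j in range(3)]
    spikes = [0, 1, 0, 0, 0, 1, 0, 0]  # 2 of 8 inputs active (25% activity)
    dense_out, dense_ops = dense_layer(weights, spikes)
    event_out, event_ops = event_driven_layer(weights, spikes)
    print(dense_out == event_out, dense_ops, event_ops)  # → True 24 6
```

Both passes produce identical outputs, but at 25% input activity the event-driven pass performs 6 synaptic updates instead of 24. The gap widens as activity gets sparser and vanishes for fully dense inputs, which is why the two architectures suit different workloads.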

Can existing AI models run on neuromorphic chips?

Partially. Conventional deep neural networks (DNNs) can be converted to spiking neural networks (SNNs) to run on neuromorphic hardware, but this process typically results in accuracy losses of 2–10 percentage points and requires specialized expertise. Native SNN models trained specifically for neuromorphic hardware perform best, but the tooling to build them is still maturing.
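One core idea behind rate-based ANN-to-SNN conversion is that an integrate-and-fire neuron driven by a constant input fires at a rate approximating a clipped ReLU of that input. The sketch below assumes reset-by-subtraction (a common choice in conversion work) and a single neuron; the function names are hypothetical, and real conversion toolchains also rescale weights and thresholds layer by layer, which this omits.

```python
def if_firing_rate(activation: float, timesteps: int, threshold: float = 1.0) -> float:
    """Firing rate of an integrate-and-fire neuron under constant input.

    Uses reset-by-subtraction, so the rate approximates a clipped ReLU
    of the input, quantized to multiples of 1 / timesteps.
    """
    potential = 0.0
    spike_count = 0
    for _ in range(timesteps):
        potential += activation
        if potential >= threshold:
            spike_count += 1
            potential -= threshold  # keep the residual charge instead of zeroing
    return spike_count / timesteps


def clipped_relu(activation: float, threshold: float = 1.0) -> float:
    """The ANN activation the spiking neuron is meant to reproduce."""
    return min(max(activation, 0.0), threshold) / threshold


if __name__ == "__main__":
    a = 0.3  # hypothetical pre-activation of one ANN neuron
    for steps in (4, 16, 64, 256):
        rate = if_firing_rate(a, steps)
        print(f"T={steps:>3}  rate={rate:.4f}  error={abs(rate - clipped_relu(a)):.4f}")
```

With only 4 time steps the rate can take values only in steps of 0.25, so short inference windows quantize activations coarsely. Longer windows shrink the error but add latency, one illustration of why converted models can lose accuracy.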

What industries will benefit most from neuromorphic chips?

The sectors with the clearest near-term benefit are automotive (autonomous driving), healthcare (wearable diagnostics, prosthetics), defense (autonomous UAVs, real-time threat detection), industrial IoT (predictive maintenance), and smart security (on-device facial recognition). All share the common requirement of low-latency, low-power AI inference without reliable cloud connectivity.

How energy efficient are neuromorphic chips compared to conventional AI hardware?

Neuromorphic chips are dramatically more energy efficient on specific workloads. On ResNet-50 image classification, IBM’s NorthPole achieved 25 times higher energy efficiency (frames per joule) and 22 times lower latency than a GPU built on a comparable 12 nm process. Intel’s Loihi 2 performs keyword spotting at 7.3 times lower energy than a comparable ARM Cortex-M4 processor. These gains are workload-specific and most pronounced on sparse, event-driven inference tasks.

What is the biggest barrier to neuromorphic chip adoption?

The biggest barrier is software ecosystem immaturity. There is no neuromorphic equivalent to PyTorch or CUDA, the software stacks that allow millions of engineers to build and deploy AI models efficiently; frameworks such as Lava and Nengo exist but serve far smaller developer communities. Until neuromorphic programming becomes as accessible as conventional deep learning, adoption will remain concentrated in specialized research and high-value commercial niches.

How does neuromorphic computing relate to quantum computing?

Neuromorphic computing and quantum computing are distinct technologies targeting different problem types. Neuromorphic chips optimize real-time sensory inference and adaptive learning at the edge. Quantum computing targets specific optimization, cryptography, and simulation problems that are intractable for classical computers. Both will coexist alongside conventional silicon in future heterogeneous computing architectures.

Which companies are the best positioned in the neuromorphic chip space?

Intel and IBM lead in research capability and institutional partnerships. BrainChip is the leading pure-play commercial neuromorphic chip company with the most accessible platform for enterprise developers. In the startup space, SynSense and Prophesee hold strong positions in vision-specific neuromorphic applications. Long-term positioning will depend heavily on which company’s software ecosystem gains the most developer adoption.

When will neuromorphic chips reach mainstream consumer devices?

Industry analysts and chip manufacturers generally project mainstream consumer integration of neuromorphic processing elements in smartphones and wearables between 2027 and 2030. The most likely initial integration will be as a specialized low-power co-processor for always-on voice, gesture, and health sensing — alongside existing CPU, GPU, and NPU cores — rather than as a standalone replacement.
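The co-processor pattern described above can be sketched as a simple power budget: a small always-on detector runs continuously and wakes the main CPU, GPU, or NPU only when it spots a relevant event. The function and the milliwatt figures below are hypothetical placeholders chosen to show the arithmetic, assuming the main processor draws no power while asleep.

```python
def average_power_mw(p_always_on_mw: float, p_main_mw: float, wake_fraction: float) -> float:
    """Average power when an always-on co-processor gates a larger processor.

    Assumes the main processor draws `p_main_mw` only while awake and nothing
    while asleep (a simplification: real chips have non-zero sleep power).
    """
    if not 0.0 <= wake_fraction <= 1.0:
        raise ValueError("wake_fraction must be between 0 and 1")
    return p_always_on_mw + wake_fraction * p_main_mw


if __name__ == "__main__":
    # Hypothetical figures for illustration only, not measurements of any chip.
    main_always_listening = average_power_mw(0.0, 500.0, 1.0)  # no co-processor
    gated = average_power_mw(1.0, 500.0, 0.01)  # 1 mW detector, main awake 1% of the time
    print(main_always_listening, gated)
```

Under these made-up numbers the gated design averages roughly 6 mW instead of 500 mW; the benefit depends on how rarely the detector needs to wake the main processor.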

Our Methodology

This article was researched using peer-reviewed publications from Nature, Science, and IEEE Xplore; official technical documentation from Intel Labs, IBM Research, and BrainChip; market research reports from MarketsandMarkets, Allied Market Research, and Grand View Research; and public data from DARPA, the European Human Brain Project, and the International Energy Agency (IEA). All statistics cited have been cross-referenced against at least one primary or institutional source. Market projections are drawn from reports published between January 2023 and June 2025. Expert quotes were sourced from published interviews, official research papers, and institutional press releases. This article does not constitute investment advice. All market capitalization and pricing data reflects conditions as of July 2025.

Sources

- MarketsandMarkets — Neuromorphic Computing Market Global Forecast Report 2024

- Intel Labs — Neuromorphic Computing Research and Loihi 2 Documentation

- Nature — Brain-Inspired Computing Architecture Review 2023

- IEEE Xplore — Spiking Neural Networks: Architecture and Efficiency Review 2023

- International Energy Agency — Electricity 2024: Analysis and Forecast

- Allied Market Research — Neuromorphic Computing Market by Application and Region 2024

- European Human Brain Project — Neuromorphic Computing Platform Overview

- Grand View Research — Edge AI Hardware Market Analysis 2024

- DARPA — SyNAPSE Program: Systems of Neuromorphic Adaptive Plastic Scalable Electronics

- IBM Research — NorthPole Neuromorphic Chip Technical Overview 2023

- National Science Foundation — CHIPS and Science Act Funding Programs

- World Intellectual Property Organization — Technology Trends: Neuromorphic Computing Patents 2024

- Science — Neural Inference at the Frontier of Energy, Space, and Time (IBM NorthPole) 2023

- BrainChip — Akida Neuromorphic Processor Technical Specifications

- Gartner — Emerging Technologies Hype Cycle 2024