Fact-checked by the VisualEnews editorial team

Quick Answer

AI models are getting smaller and more efficient because advances in quantization, pruning, and knowledge distillation allow developers to compress model size by up to 90% with minimal accuracy loss. As of July 2025, leading compact models like Microsoft’s Phi-3 Mini deliver performance close to GPT-3.5 with 3.8 billion parameters, compared to hundreds of billions in older frontier models, making on-device AI deployment practical and cost-effective.

The push toward smaller, more efficient AI models is reshaping how artificial intelligence is built and deployed. Research from Stanford’s 2024 AI Index Report confirms that model performance per compute dollar has improved by more than 2x annually since 2020, driven directly by efficiency-focused architectures rather than raw scale alone.

This shift matters because it determines where AI runs — on your phone, in a hospital device, or at the network edge — and who can afford to use it. This guide explains the core techniques behind model compression, why major labs are prioritizing smaller models, and what it means for the future of AI on everyday devices.

Key Takeaways

- Model pruning can reduce neural network size by up to 90% with less than 1% accuracy loss, according to foundational NeurIPS research on network compression.

- Microsoft’s Phi-3 Mini, with just 3.8 billion parameters, benchmarks comparably to models ten times its size, as shown in Microsoft Azure’s Phi-3 technical overview.

- Running a large frontier model costs approximately $0.01–$0.06 per 1,000 tokens, while efficient smaller models can cut inference costs by 60–80%, per OpenAI’s published pricing data.

- Google’s Gemma 2 family, released in 2024, achieved state-of-the-art results at the 9 billion parameter scale, documented in Google’s official Gemma 2 announcement.

- The global edge AI market is projected to reach $107 billion by 2028, fueled in large part by demand for smaller, on-device models, according to MarketsandMarkets industry research.

In This Guide

- Why Are AI Models Getting Smaller?

- What Techniques Make AI Models More Efficient?

- Which Small AI Models Are Leading the Industry?

- How Do Smaller Models Reduce Cost and Energy Use?

- Why Does Small-Model Efficiency Matter for On-Device Use?

- What Are the Tradeoffs of Using Smaller AI Models?

- What Is the Future of AI Model Efficiency?

Why Are AI Models Getting Smaller?

AI models are shrinking because scale alone proved unsustainable. Training GPT-3 cost an estimated $4.6 million in compute, according to Lambda Labs’ compute cost analysis, a figure that put training at that scale out of reach for most organizations.

Researchers discovered that intelligent architecture design, better training data, and compression algorithms could match or exceed the performance of brute-force scaling. This realization redirected investment from building ever-larger models toward making smaller models more efficient.

The Scaling Wall

The original assumption in deep learning was simple: more parameters meant better performance. That assumption held through GPT-2, GPT-3, and early large language models. But diminishing returns became evident as models crossed the 100-billion-parameter threshold.

DeepMind’s landmark Chinchilla scaling laws paper demonstrated in 2022 that most large models were actually undertrained relative to their size. Training a smaller model on more data consistently outperformed a larger model trained on less. This was a pivotal insight for the entire industry.

DeepMind’s Chinchilla model, with 70 billion parameters, outperformed OpenAI’s GPT-3 (175 billion parameters) on most language benchmarks — using less than half the compute to run inference.
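The "less than half" figure can be checked with a common rule of thumb, which is an assumption here and not something the cited paper is quoted on: a dense transformer spends roughly 2 floating-point operations per parameter for each generated token. A minimal sketch under that assumption:

```python
# Rule-of-thumb assumption: a dense transformer needs about
# 2 floating-point operations per parameter for each generated token.
FLOPS_PER_PARAM_PER_TOKEN = 2

def inference_flops_per_token(n_params: float) -> float:
    """Approximate forward-pass FLOPs needed to generate one token."""
    return FLOPS_PER_PARAM_PER_TOKEN * n_params

chinchilla = inference_flops_per_token(70e9)   # 70B parameters
gpt3 = inference_flops_per_token(175e9)        # 175B parameters

ratio = chinchilla / gpt3
print(f"Chinchilla needs about {ratio:.0%} of GPT-3's per-token compute")
```

Because the estimate scales linearly with parameter count, the ratio is simply 70/175, or about 40%.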

What Techniques Make AI Models More Efficient?

Four core techniques drive small-model efficiency: quantization, pruning, knowledge distillation, and mixture-of-experts (MoE) architecture. Each targets a different dimension of waste within a neural network.

Quantization and Pruning

Quantization reduces the numerical precision of model weights, for example by converting 32-bit floating-point numbers to 8-bit or even 4-bit integers. Going from 32-bit to 8-bit shrinks the memory footprint of the weights by 75% (4-bit storage saves about 87.5%), with minimal impact on output quality, as documented in Hugging Face’s quantization integration guide.
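To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization using only the Python standard library. It is not how any particular library implements quantization. The weight values and the per-tensor scaling scheme are illustrative assumptions.

```python
from array import array

def quantize_int8(weights):
    """Symmetric per-tensor quantization of float weights to int8."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = array("b", (round(w / scale) for w in weights))  # signed 8-bit ints
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from int8 values."""
    return [v * scale for v in q]

# Toy weights stored as 32-bit floats (illustrative values only).
weights = array("f", [0.42, -1.27, 0.05, 0.9, -0.33, 1.1, -0.76, 0.0])
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

fp32_bytes = len(weights) * weights.itemsize   # 4 bytes per weight
int8_bytes = len(q) * q.itemsize               # 1 byte per weight
saving = 1 - int8_bytes / fp32_bytes           # fraction of memory saved
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(f"memory saved: {saving:.0%}, worst-case rounding error: {max_error:.4f}")
```

Each weight is off by at most half a quantization step (`scale / 2`), which is why quality usually degrades only slightly.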

Pruning removes weights that contribute least to model outputs. Think of it as trimming dead branches from a tree. Together, quantization and pruning are the fastest ways to shrink a model without retraining from scratch.
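One simple way to decide which weights "contribute least" is magnitude pruning, which zeroes the weights with the smallest absolute value. The sketch below assumes that criterion and uses made-up weights. Production pruning methods are typically more elaborate and are followed by some fine-tuning.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    n_prune = int(len(weights) * sparsity)
    # Indices sorted from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]

weights = [0.8, -0.02, 0.5, 0.01, -0.9, 0.03, -0.04, 0.6, 0.005, -0.7]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)
```

Zeroed weights only save memory or compute when they are stored in a sparse format or removed in whole structures such as rows, heads, or channels.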

Knowledge Distillation

Knowledge distillation trains a smaller “student” model to replicate the behavior of a larger “teacher” model. The student learns not just the correct answers but the probability distributions the teacher assigns to all possible answers — capturing nuance that raw training data alone cannot provide.

This technique is the backbone of models like DistilBERT from Hugging Face, which retains 97% of BERT’s language-understanding performance while being 40% smaller and 60% faster, per the original DistilBERT research paper. Knowledge distillation is now standard practice across Meta AI, Google DeepMind, and Anthropic.
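The "probability distributions" part is usually expressed as a loss that compares the teacher’s and student’s softened outputs. The following is a minimal sketch of that soft-target term only, with hypothetical logits and an assumed temperature of 2. It is not the DistilBERT training recipe.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; higher temperature gives softer output."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)                                # subtract max for stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's."""
    p = softmax(teacher_logits, temperature)       # teacher (target)
    q = softmax(student_logits, temperature)       # student (prediction)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

teacher = [4.0, 2.5, 0.5]        # hypothetical logits for three classes
good_student = [3.8, 2.4, 0.6]   # ranks the classes like the teacher
poor_student = [0.5, 2.5, 4.0]   # ranks the classes in reverse

print(distillation_loss(teacher, good_student))
print(distillation_loss(teacher, poor_student))
```

The loss is near zero when the student reproduces the teacher’s full distribution, not just its top answer. In practice this term is commonly combined with an ordinary cross-entropy loss on the true labels.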

Which Small AI Models Are Leading the Industry?

Several compact models have set new efficiency benchmarks in 2024 and 2025. Microsoft, Google, Meta, and Mistral AI all ship production-ready small models that outperform older, much larger systems.

Phi-3, Gemma, and Mistral

Microsoft’s Phi-3 Mini (3.8B parameters) and Phi-3 Small (7B parameters) score close to or above GPT-3.5 on standard benchmarks like MMLU and HumanEval. Microsoft attributes this to high-quality, curated training data rather than scale, as explained in their Phi-3 technical blog post.

Google’s Gemma 2 (9B and 27B variants) and Meta’s Llama 3 family (8B and 70B) demonstrate that open-weight small models can match closed frontier systems on many tasks. Mistral 7B from Mistral AI remains one of the most widely deployed open models globally, favored for its speed and low memory requirements.

| Model | Parameters | Developer | MMLU Score | Inference Cost (per 1M tokens) |
|---|---|---|---|---|
| Phi-3 Mini | 3.8B | Microsoft | 68.8 | ~$0.10 |
| Gemma 2 9B | 9B | Google DeepMind | 71.3 | ~$0.20 |
| Mistral 7B | 7B | Mistral AI | 64.1 | ~$0.15 |
| Llama 3 8B | 8B | Meta AI | 66.6 | ~$0.10 |
| GPT-3.5 Turbo | ~175B | OpenAI | 70.0 | ~$0.50 |

“The era of scaling at all costs is over. The next frontier is doing more with less — and the models coming out of that philosophy are already competitive with systems that cost ten times as much to run.”

How Do Smaller Models Reduce Cost and Energy Use?

Small-model efficiency translates directly into lower electricity consumption and infrastructure spending. Inference, the process of running a model to generate responses, accounts for the majority of AI’s operational cost, not training.

Energy Consumption at Scale

A single query to a large language model consumes roughly 10x more energy than a standard Google search, according to the International Energy Agency’s 2024 Electricity report. At billions of daily queries, this adds up to a significant share of global data center power draw.

Compact models running on specialized hardware, such as NVIDIA’s Jetson platform or Apple’s Neural Engine, can process the same query using under 5 watts of power, compared to over 300 watts for a single data center GPU. Lower power draw cuts operating costs for providers and makes battery-powered and embedded deployments feasible.

Efficient small models can reduce AI inference energy consumption by up to 80% compared to frontier-scale models, according to estimates from the International Energy Agency. That translates to millions of dollars in annual savings for large-scale deployments.

For businesses running AI at scale, this efficiency gain is not abstract. A company processing 1 million AI queries per day could reduce monthly inference costs from roughly $15,000 to under $3,000 by switching from a frontier model to a well-matched compact alternative. This is also relevant to how AI-powered budgeting apps are becoming more accessible — smaller models make consumer-grade AI tools economically viable.
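The arithmetic behind that estimate can be reproduced with a few lines. This sketch borrows the per-token prices from the comparison table above as stand-ins, and it assumes 1,000 tokens per query and a 30-day month. Neither assumption comes from the article.

```python
QUERIES_PER_DAY = 1_000_000
DAYS_PER_MONTH = 30
TOKENS_PER_QUERY = 1_000          # assumption, not a figure from the article

def monthly_cost(price_per_million_tokens: float) -> float:
    """Monthly inference bill in dollars for the workload defined above."""
    tokens = QUERIES_PER_DAY * DAYS_PER_MONTH * TOKENS_PER_QUERY
    return tokens / 1_000_000 * price_per_million_tokens

larger = monthly_cost(0.50)       # GPT-3.5 Turbo row in the table
compact = monthly_cost(0.10)      # Phi-3 Mini / Llama 3 8B rows
print(f"${larger:,.0f} vs ${compact:,.0f} per month "
      f"({1 - compact / larger:.0%} lower)")
```

Under these assumptions the bill drops from about $15,000 to about $3,000 a month. Real workloads vary widely in tokens per query, so the inputs are worth replacing with measured values.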

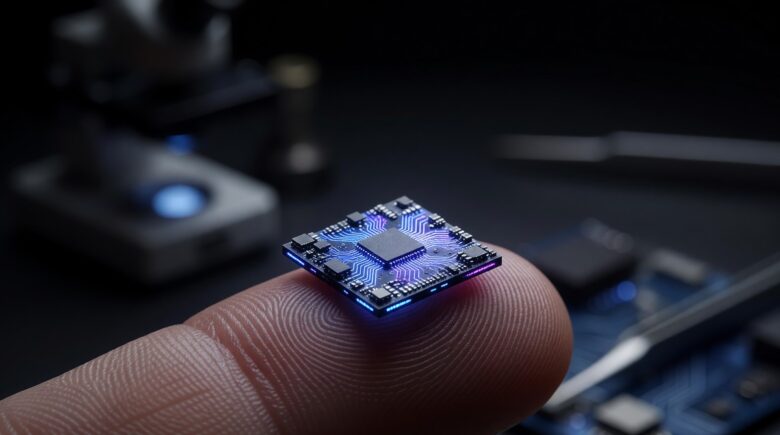

Why Does Small-Model Efficiency Matter for On-Device Use?

On-device AI, meaning inference that runs entirely on a smartphone, laptop, or wearable, is only possible because of gains in small-model efficiency. Cloud-dependent AI introduces latency, privacy risks, and connectivity requirements that on-device deployment eliminates.

Privacy and Latency Advantages

When a model runs locally, user data never leaves the device. This is a significant regulatory and trust advantage, particularly under frameworks like the EU’s AI Act and the GDPR. Apple’s AI features in iOS 18, branded as Apple Intelligence, rely heavily on compressed, on-device models, with more demanding requests routed to Apple’s Private Cloud Compute.

Latency drops from hundreds of milliseconds for a cloud round-trip to under 50 milliseconds for on-device inference. For real-time applications like voice assistants, medical monitoring, or industrial automation, this difference is decisive. This connects directly to developments in edge computing, where processing power moves closer to the data source.

Impact on Wearables and Embedded Devices

Compact AI models are now embedded in devices with severe resource constraints — hearing aids, smartwatches, and industrial sensors. The advances in wearable technology for health tracking depend directly on models that fit within a few megabytes of memory while delivering real-time analysis.

Qualcomm’s Snapdragon X Elite chip and Apple’s M4 Neural Engine are both architected specifically to accelerate quantized small models. Hardware and software efficiency gains are compounding, making on-device AI a mainstream reality rather than a research concept.

Apple’s M4 Neural Engine can perform 38 trillion operations per second (TOPS), enabling full on-device inference for compact language models without a cloud connection. That is roughly double the 18 TOPS reported for the M3 generation.

What Are the Tradeoffs of Using Smaller AI Models?

Smaller models are not universally superior. The core tradeoff is task complexity: compact models excel at well-defined, narrow tasks but can struggle with multi-step reasoning, long-context understanding, and highly specialized domains.

Context Windows and Reasoning Limits

Most small models support context windows of 4,096 to 32,768 tokens. Frontier models like GPT-4o support up to 128,000 tokens. For tasks involving long documents, legal contracts, or complex codebases, a larger model is often the right tool. The key is matching model capability to task requirements.

Hallucination rates — instances where a model generates plausible but false information — tend to be slightly higher in smaller models, particularly on low-frequency knowledge. Organizations deploying compact models for high-stakes applications like healthcare or legal analysis should implement retrieval-augmented generation (RAG) as a reliability layer. Understanding how AI is changing search provides useful context for how RAG architectures are being adopted at scale.

Fine-Tuning as a Mitigation Strategy

Domain-specific fine-tuning largely closes the performance gap for targeted applications. A Mistral 7B model fine-tuned on medical literature can outperform a general-purpose 70B model on clinical reasoning tasks. Fine-tuning also lets organizations keep the efficiency of a small model without sacrificing domain accuracy.

The combination of low-rank adaptation (LoRA) fine-tuning and quantization has become the industry-standard approach for deploying compact models in production. This keeps both training and inference costs low while maintaining task-specific performance.
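LoRA keeps training cheap because it freezes each large weight matrix and learns only a low-rank update, the product of two thin matrices. The sketch below counts trainable weights for one matrix. The hidden size of 4096 and rank of 8 are hypothetical values chosen for illustration.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable weights when updating a dense d_out x d_in matrix directly."""
    return d_out * d_in

def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable weights for a LoRA update B @ A, with B: d_out x rank, A: rank x d_in."""
    return rank * (d_out + d_in)

d = 4096     # hypothetical hidden size of one projection matrix
rank = 8     # hypothetical LoRA rank

full = full_finetune_params(d, d)
lora = lora_params(d, d, rank)
print(f"full: {full:,}  LoRA: {lora:,}  ({lora / full:.2%} of full)")
```

With these numbers the adapter trains well under 1% of the weights in that matrix, and the frozen base weights can stay quantized while the adapter is trained.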

Before selecting a model size, benchmark it specifically on your use case rather than relying on general leaderboard scores. A 7B parameter model fine-tuned on your domain data will frequently outperform a 70B general-purpose model — at a fraction of the inference cost.

What Is the Future of AI Model Efficiency?

The trajectory of small-model efficiency points toward models that are simultaneously more capable and less resource-intensive. Several emerging techniques are accelerating this trend in 2025 and beyond.

Mixture-of-Experts and Sparse Architectures

Mixture-of-Experts (MoE) architectures activate only a small subset of model parameters for any given input. Google’s Gemini 1.5 and Mistral’s Mixtral 8x7B use MoE to deliver the effective capacity of a large dense model while running at the cost of a much smaller one. This is arguably the most significant architectural innovation since the transformer itself.
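The routing step can be sketched as top-k gating: a small router scores every expert, and only the best k are run. The toy example below uses eight experts with two active per input, the configuration Mixtral 8x7B is described as using. The router scores and the "experts" themselves are placeholders, not real networks.

```python
import math

def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    exps = [math.exp(router_logits[i] - router_logits[top[0]]) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_layer(x, experts, router_logits, k=2):
    """Combine the outputs of only the selected experts for input x."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))

# Eight toy "experts": each just scales its input (placeholders for real networks).
experts = [lambda x, s=s: s * x for s in range(1, 9)]
router_logits = [0.1, 2.0, -1.0, 0.3, 2.0, 0.0, -0.5, 1.0]  # hypothetical scores

chosen = top_k_route(router_logits, k=2)
print(chosen)                       # experts 1 and 4, equal weight
print(moe_layer(10.0, experts, router_logits))
```

Only two of the eight expert functions are called here, which is the source of the compute savings. All eight still have to be held in memory.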

Sparse models and structured pruning will continue to push efficiency frontiers. Researchers at MIT and Carnegie Mellon University are exploring neuromorphic computing architectures that mimic biological neural efficiency — promising another order-of-magnitude improvement in energy use per inference operation.

Implications for Everyday Technology

As efficiency continues to improve, the distinction between “AI features” and “software features” will blur. Every application — from laptops for remote workers to next-generation wireless devices — will include embedded AI capabilities powered by efficient local models. The question will no longer be whether a device runs AI, but how well.

The competitive pressure from open-weight models released by Meta AI, Mistral AI, and Google DeepMind is also forcing the entire industry toward efficiency. When a free, open model matches a paid frontier model on most tasks, the market incentive to build smaller and smarter becomes overwhelming. Small-model efficiency is not a temporary trend. It is the new baseline for how AI will be built.

“Efficiency is the new capability. A model that runs on your phone, respects your privacy, and responds in real time is more useful to most people than a model that requires a data center but scores two points higher on a benchmark.”

Frequently Asked Questions

What does “smaller AI model” actually mean?

A smaller AI model has fewer parameters — the numerical weights that define its behavior. Modern compact models typically range from 1 billion to 13 billion parameters, compared to hundreds of billions in frontier models. Fewer parameters mean less memory, faster inference, and lower energy use.
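As a rough illustration of how parameter count maps to memory, the sketch below multiplies the number of weights by the bytes each one occupies at common precisions. It covers weights only and ignores activations, caches, and runtime overhead, so real memory use is higher.

```python
BYTES_PER_WEIGHT = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}

def weight_memory_gb(n_params: float, precision: str) -> float:
    """Memory needed to hold the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * BYTES_PER_WEIGHT[precision] / 1e9

for precision in BYTES_PER_WEIGHT:
    gb = weight_memory_gb(3.8e9, precision)     # Phi-3 Mini parameter count
    print(f"{precision}: {gb:.1f} GB")
```

A 3.8-billion-parameter model needs about 15.2 GB at 32-bit precision but only about 1.9 GB at 4-bit, which is the difference between needing a workstation GPU and fitting on a phone.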

Can a small AI model be as good as a large one?

For specific, well-defined tasks, yes. Microsoft’s Phi-3 Mini (3.8B parameters) matches or exceeds GPT-3.5 on coding and reasoning benchmarks. For broad, open-ended tasks requiring wide world knowledge or very long context, larger models still hold an advantage.

What is quantization in AI?

Quantization reduces the numerical precision of a model’s weights, for example from 32-bit floating point to 8-bit or 4-bit integers. At 8 bits this shrinks model size by 75%, and 4-bit formats go further, usually with minimal accuracy loss. It is one of the most widely used ways to make a model smaller and more efficient without full retraining.

Why are companies building smaller AI models now?

Cost, energy, and deployment constraints are the primary drivers. Running large models at scale is expensive and energy-intensive. Smaller models enable on-device deployment, faster inference, lower cloud costs, and compliance with data privacy regulations like the GDPR and the EU AI Act.

What is knowledge distillation in machine learning?

Knowledge distillation trains a compact “student” model to replicate the output behavior of a larger “teacher” model. The student absorbs not just correct answers but the teacher’s confidence distributions, resulting in a small model that punches well above its parameter weight.

Are smaller AI models better for privacy?

Yes, in most cases. On-device models process data locally, meaning sensitive information never leaves the user’s hardware. This is a core reason Apple, Google, and Qualcomm are investing heavily in hardware accelerators optimized for compact, efficient models.

What is the difference between model size and model performance?

Model size refers to parameter count; performance refers to accuracy and capability on specific tasks. These are not directly proportional. A well-trained 7B model on high-quality data routinely outperforms a poorly trained 70B model. Training data quality and architecture design matter as much as size.

Sources

- Stanford HAI — AI Index Report 2024

- DeepMind — Chinchilla Scaling Laws Paper (Hoffmann et al., 2022)

- Microsoft Azure — Introducing Phi-3: Redefining What’s Possible with SLMs

- Google — Gemma 2 Official Announcement

- Hugging Face — DistilBERT Research Paper (Sanh et al., 2019)

- International Energy Agency — Electricity 2024 Report

- Hugging Face — Making LLMs Lighter with Quantization

- OpenAI — API Pricing

- MarketsandMarkets — Edge AI Market Size and Forecast

- Lambda Labs — Demystifying GPT-3 Training Costs